Imagine a world where machines don’t just process data but actually see and understand what’s around them. This isn’t science fiction; it’s the reality of computer vision. Central to it is a powerful technology called object detection, which lets computers identify and pinpoint objects within digital images and videos, transforming how we interact with technology and the world.

If you’ve ever wondered how a self-driving car spots a pedestrian, or how your phone automatically tags faces in photos, you’ve seen object detection in action. It’s a complex capability that feels intuitive, and it’s quickly reshaping industries and improving our daily lives. This article takes you on a journey through object detection: its foundations, how it works, its many applications, and the future it promises.

Understanding Object Detection: How AI Interprets Visual Data

Object detection helps machines make sense of the visual world. Think of it as teaching a computer to act like a highly observant detective. Instead of just glancing at a scene, the computer carefully scans it, identifies every item of interest, and tells you both what each item is and where it is. This goes well beyond basic image recognition: it provides a detailed, spatial understanding of the scene.

Object detection is one of the key tasks in computer vision. It allows a smart surveillance camera not only to see a person but also to recognize that figure as a person and locate it within the frame. This combination of recognition and location makes the technology useful in many real-world situations and gives machines a much deeper understanding of what they are “looking” at.

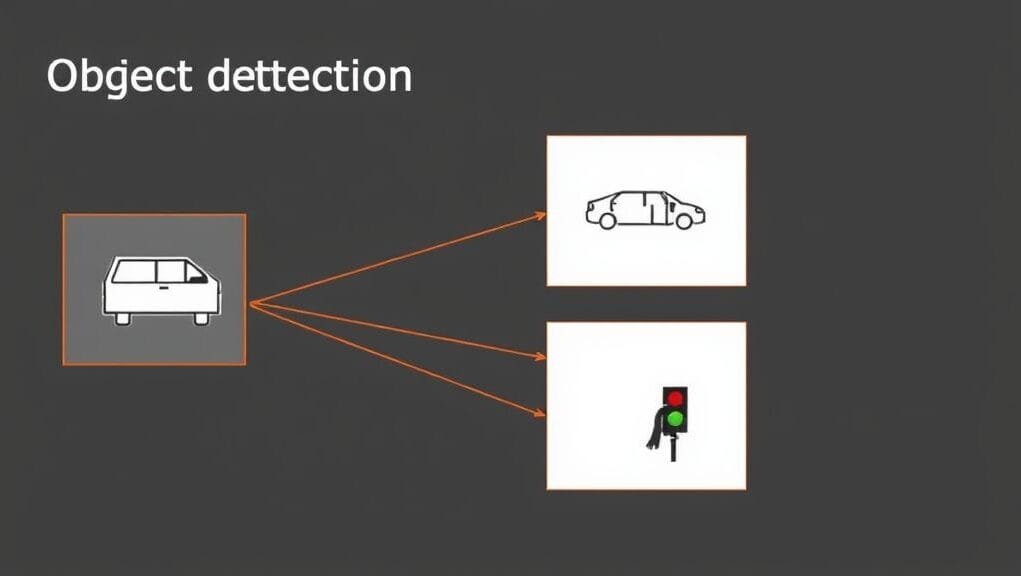

Core Components of Object Detection: Localization and Classification

Object detection isn’t just one task. Instead, it’s a powerful combination of two different, but equally important, sub-tasks. Understanding these two parts is key to seeing everything object detection can do.

First, there’s object localization. Here, the machine acts like an artist sketching an outline: it draws a bounding box around each object it finds in an image or video frame. The box records the object’s location and its extent (width and height), providing spatial information that a simple label alone cannot. It’s like saying, “Here is where the car is, and this is the region of the image it occupies.”

Second, we have object classification. Each bounding box also receives a label, usually with a confidence score. This is where the machine figures out what the detected object is: for example, a “cat,” “traffic light,” or “bottle.” Together, localization and classification give us a complete picture: “Here is a bounding box around what we confidently identify as a bicycle.”
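To make the two sub-tasks concrete, here is a minimal Python sketch of what a single detection might look like. It assumes boxes are stored as pixel corner coordinates (x1, y1, x2, y2) and that the classifier reports a confidence score; the `Detection` class and `iou` helper are illustrative names, not any particular library’s API. Intersection over Union (IoU), the ratio of two boxes’ overlap to their combined area, is a common way to compare a predicted box with a ground-truth one.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One detected object: where it is (box) and what it is (label, score)."""
    x1: float  # left edge, in pixels
    y1: float  # top edge
    x2: float  # right edge
    y2: float  # bottom edge
    label: str  # classification result, e.g. "bicycle"
    score: float  # classifier confidence in [0, 1]

    def area(self) -> float:
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def iou(a: Detection, b: Detection) -> float:
    """Intersection over Union of two boxes: 0 = no overlap, 1 = identical."""
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = a.area() + b.area() - inter
    return inter / union if union > 0 else 0.0


# Hypothetical prediction compared with a hypothetical ground-truth box.
pred = Detection(10, 10, 110, 60, "bicycle", 0.92)
truth = Detection(20, 10, 120, 60, "bicycle", 1.0)
print(f"{pred.label} ({pred.score:.0%}), IoU with ground truth: {iou(pred, truth):.2f}")
```

The box answers “where,” the label and score answer “what,” and IoU gives a single number for how well the “where” matches reality.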

Comparing Vision Tasks

To really understand object detection, it helps to see how it differs from other common computer vision tasks. While these fields are related, they have different purposes and offer different levels of detail.

Image classification, for example, is much simpler. If you show an image classifier a picture of a dog, it will simply tell you, “This image contains a dog.” However, this doesn’t tell you where the dog is, nor does it say how many dogs there are. Moreover, it doesn’t indicate what else might be in the picture; instead, it gives one main label for the whole image.

Image segmentation, on the other hand, gives a more detailed view than object detection. Rather than drawing a bounding box, segmentation techniques produce a pixel-level outline of each object: every pixel belonging to an object is labeled, which captures the object’s exact shape. That extra precision is more computationally intensive, so it’s often unnecessary in the many applications where a bounding box is enough.

Object detection sits in the middle, offering a practical balance of precision and speed. It provides enough detail to find and identify objects without the heavy computational demands of pixel-level segmentation, which makes it a flexible tool for many real-world problems.
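The relationship between segmentation and detection outputs can be shown with a small sketch: a segmentation mask marks every object pixel, and the tightest bounding box can be derived from it, but not the other way around. The `mask_to_box` helper is hypothetical and assumes a binary mask stored as a list of rows (1 = object pixel).

```python
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2), inclusive pixel indices


def mask_to_box(mask: List[List[int]]) -> Optional[Box]:
    """Return the tightest box around all pixels set to 1, or None if empty."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    if not ys:
        return None
    return (min(xs), min(ys), max(xs), max(ys))


# A 5 x 6 segmentation mask of an L-shaped object.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
]

box = mask_to_box(mask)
box_pixels = (box[2] - box[0] + 1) * (box[3] - box[1] + 1)
object_pixels = sum(map(sum, mask))
print(box, f"object fills {object_pixels}/{box_pixels} box pixels")
```

Here the object covers only 5 of the 9 pixels inside its box: the box is enough to say where the object is, while the mask also records its shape, at the cost of labeling and predicting every pixel.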

The Evolution of Object Detection: From Rules to Deep Learning

The journey of object detection has been remarkable. What began as clever, rule-based systems has grown into a field powered by artificial intelligence, especially deep learning. Tracing this change helps explain why today’s systems are so capable.

Early computer vision researchers faced a huge challenge: how do you teach a computer to recognize something as changeable as a human face or a car? Such objects can appear in many forms, angles, and lighting conditions. Initially, solutions relied on carefully designed algorithms that looked for specific features.

Early Vision Systems: Handcrafted Features

In the early days of object detection, researchers carefully designed algorithms to find specific visual cues, often referred to as “handcrafted features.” Think of it like a human trying to identify a face by looking for two eyes, a nose, and a mouth in a specific arrangement. Similarly, machines would look for such patterns.

One notable example is the Viola-Jones framework, which became famous for real-time face detection in the early 2000s. It evaluated simple rectangular (Haar-like) features through a cascade of increasingly selective classifiers, quickly discarding regions that didn’t look like faces. Another technique, Histogram of Oriented Gradients (HOG), described an object’s shape by recording, within small image cells, how strongly pixel intensity changes in each direction. While clever for their time, these methods often struggled with changes in lighting, pose, and scale, and experts had to tune them extensively by hand. They were like skilled artisans, each crafting a specific tool for a specific job.
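The core idea behind HOG can be sketched in a few lines: compute each pixel’s intensity gradient, then build a histogram of gradient directions weighted by gradient strength. This is a simplified single-cell sketch under stated assumptions (grayscale input as nested lists, central-difference gradients, 9 unsigned orientation bins over 0 to 180 degrees, no interpolation between bins, no block normalization), not a full HOG implementation.

```python
import math
from typing import List


def orientation_histogram(cell: List[List[float]], bins: int = 9) -> List[float]:
    """Histogram of gradient orientations (0-180 deg), weighted by magnitude.

    Gradients use central differences, so border pixels are skipped.
    """
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(1, len(cell) - 1):
        for x in range(1, len(cell[0]) - 1):
            gx = cell[y][x + 1] - cell[y][x - 1]  # horizontal intensity change
            gy = cell[y + 1][x] - cell[y - 1][x]  # vertical intensity change
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue
            # "Unsigned" orientation: dark-to-bright and bright-to-dark edges
            # along the same line fall into the same bin.
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle // bin_width) % bins] += magnitude
    return hist


# An 8x8 patch with a vertical edge: dark left half, bright right half.
patch = [[0.0] * 4 + [1.0] * 4 for _ in range(8)]
hist = orientation_histogram(patch)
print([round(h, 2) for h in hist])
```

All the energy lands in the 0-degree bin because the intensity changes only horizontally; a hand-designed descriptor like this is exactly the kind of feature that later CNNs learned to replace.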

Deep Learning’s Impact on Object Detection: The CNN Era

The field of object detection, and much of AI, was transformed by the advent of deep learning. This branch of machine learning uses neural networks with many layers (hence “deep”) to learn patterns directly from large amounts of data. For computer vision, the game-changer was the Convolutional Neural Network (CNN).

CNNs are especially good at processing visual information. They learn to identify features automatically, from simple edges and textures in early layers to complex shapes and object parts in deeper layers. Instead of programmers telling the computer what to look for, CNNs learn how to look, discovering the most distinctive features on their own. This removed the need for handcrafted features and led to much better accuracy and robustness in object detection. In essence, it was like moving from teaching a machine one specific skill to giving it the ability to learn visual skills from examples.
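The operation a CNN layer repeats many times is a small convolution: a filter slides over the image and computes a weighted sum at each position. In the sketch below, the 3x3 weights are set by hand to respond to vertical edges, purely for illustration; in a real CNN these weights are learned from data. As in most deep learning libraries, the code actually computes cross-correlation (the kernel is not flipped) and uses no padding.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D cross-correlation: slide the kernel over every full position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            out[y][x] = sum(
                kernel[i][j] * image[y + i][x + j]
                for i in range(kh)
                for j in range(kw)
            )
    return out


# Hand-set vertical-edge detector (a Sobel-style kernel). A CNN would
# learn weights like these instead of having a programmer write them.
vertical_edge = [
    [-1.0, 0.0, 1.0],
    [-2.0, 0.0, 2.0],
    [-1.0, 0.0, 1.0],
]

# 5x6 image: dark on the left, bright on the right.
image = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
feature_map = conv2d(image, vertical_edge)
for row in feature_map:
    print(row)
```

The response is nonzero only where the 3x3 window straddles the dark-to-bright boundary; stacking many learned filters like this, layer after layer, is what lets a CNN build up from edges to object parts.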

Object Detection Architectures: Strategies for Visual Understanding

With deep learning driving the field, a variety of architectures have emerged, each with its own strengths and weaknesses. They represent different ways of handling the twin challenges of localization and classification. We can group them into two main families, along with some newer, more advanced developments.

Two-Stage Object Detectors: Achieving High Precision

Imagine a careful detective who first looks at a scene to find all possible areas of interest, and then examines each area more closely to confirm what’s there. This is the essence of two-stage detectors. These models break the object detection process into two separate phases.

In the first stage, the model proposes a set of “regions of interest” (RoIs). These are educated guesses about where objects might be, made without yet knowing what the objects are. Early models generated them with an external algorithm such as selective search; later models use a Region Proposal Network (RPN) built into the CNN.

In the second stage, the model processes each proposed RoI: it classifies the object inside and refines the bounding box’s position. This two-step approach allows for very high accuracy because the model can focus closely on each candidate object. The most famous family of two-stage detectors is the R-CNN family, including:

- R-CNN (Region-based CNN): The pioneer, but slow due to processing each region separately.

- Fast R-CNN: Improved speed by processing the entire image once and then projecting region proposals onto feature maps.

- Faster R-CNN: Made the process even faster by building the region proposal step into the network itself as an RPN that shares the CNN’s features, making the whole detector trainable end to end.

- Mask R-CNN: Extended Faster R-CNN to perform pixel-level instance segmentation in addition to detection.

While very accurate, two-stage methods are generally slower because they work in sequential steps. This makes them less suitable for real-time uses where speed matters most.
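The second stage’s box refinement is usually framed as regression: instead of raw coordinates, the network predicts small offsets relative to each proposal. The sketch below decodes such offsets using the center/size parameterization introduced with R-CNN; the proposal and offset values are made up for illustration, and real implementations add details (offset normalization, clipping to the image) that are omitted here.

```python
import math
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def apply_deltas(proposal: Box, deltas: Tuple[float, float, float, float]) -> Box:
    """Refine a region proposal with predicted (dx, dy, dw, dh) offsets.

    Center shifts are relative to the proposal's width/height, and size
    changes are in log space, so the same deltas work at any box scale.
    """
    x1, y1, x2, y2 = proposal
    pw, ph = x2 - x1, y2 - y1
    px, py = x1 + 0.5 * pw, y1 + 0.5 * ph  # proposal center
    dx, dy, dw, dh = deltas
    cx, cy = px + dx * pw, py + dy * ph  # refined center
    w, h = pw * math.exp(dw), ph * math.exp(dh)  # refined size
    return (cx - 0.5 * w, cy - 0.5 * h, cx + 0.5 * w, cy + 0.5 * h)


# Hypothetical stage-one proposal and stage-two offsets:
# shift right by 10% of the width and widen the box by about 22%.
proposal = (100.0, 50.0, 200.0, 150.0)
refined = apply_deltas(proposal, (0.1, 0.0, math.log(1.22), 0.0))
print([round(v, 1) for v in refined])
```

Predicting relative, log-scaled offsets keeps the regression targets small and comparable across large and small proposals, which is one reason this parameterization was reused in Fast and Faster R-CNN.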

One-Stage Object Detectors: Enabling Real-Time Performance

Now, imagine a very fast detective who can scan a scene and immediately find and label everything in one quick look. That’s the power of one-stage detectors. These models predict bounding boxes and class probabilities directly in a single pass of the network. There’s no separate region-proposal step; everything happens at once.

This direct approach greatly boosts speed, making one-stage detectors well suited to applications that need real-time performance, such as autonomous driving or live video surveillance. Historically, the trade-off has been slightly lower accuracy than two-stage models, but newer models continue to narrow this gap.

Key examples of one-stage detectors include:

- YOLO (You Only Look Once): As its name suggests, YOLO processes the entire image just one time. It divides the image into a grid and predicts bounding boxes and probabilities for each grid cell. The YOLO family has seen many versions (YOLOv1, YOLOv2, …, YOLOv10, YOLOv11), with each new iteration pushing the limits of speed and accuracy.

- SSD (Single Shot Detector): SSD also predicts objects in a single forward pass, using feature maps from different scales to detect objects of varying sizes.

Overall, one-stage detectors are often the best choice when processing speed is critical, such as on live video streams or resource-constrained hardware.
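The grid idea behind the original YOLO can be illustrated with a small sketch: the image is divided into an S x S grid, and the cell containing an object’s center is responsible for predicting it, along with the center’s position inside that cell. The 7 x 7 grid and 448 x 448 input follow the original YOLOv1 paper; the box is a made-up example, and later YOLO versions assign objects to cells and anchors differently.

```python
from typing import Tuple


def yolo_cell_target(box: Tuple[float, float, float, float], image_w: float,
                     image_h: float, s: int = 7) -> Tuple[int, int, float, float]:
    """Return (row, col, x_offset, y_offset) for a box in an s x s grid.

    The cell containing the box center is "responsible" for the object; the
    offsets locate the center inside that cell, from 0 (top/left edge) to 1.
    """
    x1, y1, x2, y2 = box
    gx = (x1 + x2) / 2 / image_w * s  # box center, in grid-cell units
    gy = (y1 + y2) / 2 / image_h * s
    col = min(int(gx), s - 1)  # clamp a center lying exactly on the far edge
    row = min(int(gy), s - 1)
    return row, col, gx - col, gy - row


# A 448 x 448 input and a hypothetical pedestrian box.
print(yolo_cell_target((100, 200, 180, 300), 448, 448))
```

Because every cell makes its predictions in the same forward pass, there is no separate proposal stage, which is where the speed advantage comes from.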

Vision with Transformers

While CNNs have led computer vision for years, a newer architecture is becoming very popular: Transformers. Transformers were first designed for natural language processing, where they excel at capturing context and how different parts of the data relate. Researchers have since adapted them for vision tasks, opening up exciting new possibilities.

Models like DETR (Detection Transformer) combine a CNN with a transformer. The CNN extracts features, and the transformer processes them to directly predict a set of objects, without anchor boxes or non-maximum suppression (techniques commonly used in CNN-based detectors). This simpler pipeline is a major change in design.
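For context, non-maximum suppression (NMS), the post-processing step DETR avoids, is easy to sketch. Many CNN-based detectors fire several overlapping boxes on the same object; greedy NMS keeps the highest-scoring box and discards others that overlap it beyond an IoU threshold. This is a minimal, class-agnostic version assuming (x1, y1, x2, y2) boxes and an illustrative threshold of 0.5; production code typically runs it per class and in vectorized form.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], scores: List[float], iou_threshold: float = 0.5) -> List[int]:
    """Greedy non-maximum suppression; returns indices of the boxes kept."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep box i only if it doesn't overlap too much with any box already kept.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


# Two near-duplicate boxes on one object plus a separate object.
boxes = [(10, 10, 60, 60), (12, 12, 62, 62), (100, 100, 150, 150)]
scores = [0.9, 0.75, 0.8]
print(nms(boxes, scores))
```

The weaker duplicate (index 1) is suppressed because it overlaps the stronger box by far more than the threshold. DETR instead learns to output one prediction per object, so this hand-tuned step is not needed.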

Vision Transformers (ViTs) go even further. They largely do away with convolutions, treating an image as a sequence of small patches, much like words in a sentence, and use self-attention to model how these patches relate to each other. Transformers in vision are still an active area of research, but they are showing strong results for both image classification and object detection. They may offer further gains in accuracy and efficiency, especially for tasks that depend on understanding the context of the whole image.
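The “image as a sequence of patches” idea can be sketched without any deep learning library: cut the image into non-overlapping square patches and flatten each into a vector, giving a sequence of tokens for self-attention. The sketch assumes a single-channel image stored as nested lists; a real ViT would then multiply each vector by a learned projection and add position embeddings, which is omitted here. With the common ViT setting of 16 x 16 patches, a 224 x 224 image becomes 14 x 14 = 196 tokens.

```python
from typing import List


def image_to_patches(image: List[List[float]], patch: int) -> List[List[float]]:
    """Split an H x W image into flattened, non-overlapping patch x patch tiles.

    Patches are ordered row by row (left to right, top to bottom).
    """
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    tokens = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            tokens.append([
                image[top + dy][left + dx]
                for dy in range(patch)
                for dx in range(patch)
            ])
    return tokens


# A 224 x 224 grayscale image with 16 x 16 patches -> 196 tokens of 256 values.
image = [[0.0] * 224 for _ in range(224)]
tokens = image_to_patches(image, 16)
print(len(tokens), len(tokens[0]))

# A tiny 4 x 4 example with 2 x 2 patches, to show the ordering.
tiny = [[r * 4 + c for c in range(4)] for r in range(4)]
print(image_to_patches(tiny, 2))
```

Once the image is a sequence, every patch can attend to every other patch in a single layer, which is why transformers are well placed to use whole-image context.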

Comparing Detection Architectures

Choosing the right object detection architecture usually comes down to a basic trade-off between speed and accuracy.

| Feature | Two-Stage Detectors (e.g., Faster R-CNN) | One-Stage Detectors (e.g., YOLO, SSD) | Transformer-based (e.g., DETR, ViT for detection) |
|---|---|---|---|
| Accuracy | Generally higher, especially for small or densely packed objects | Good; historically slightly lower than two-stage, though the gap is narrowing | Promising and catching up; good at using global context |
| Speed | Slower; multiple inference steps | Faster; real-time performance | Can be computationally intensive, but active research targets speed |
| Complexity | More complex: region proposal plus classification | Simpler: single-pass prediction | Self-attention is compute-heavy, but the pipeline skips anchors and NMS |
| Best for | High-precision tasks, static analysis, benchmarks | Real-time applications, embedded systems, video | Novel applications that need strong contextual understanding |

As the table shows, each approach offers clear benefits, so the best choice depends on your specific requirements and constraints.

Real-World Applications of Object Detection

The ability of machines to “see” and “understand” objects has opened up uses across almost every field. Object detection isn’t just theory; it’s a practical powerhouse that drives new ideas and solves complex real-world problems. Let’s look at some of the most important areas.

Automotive Object Detection: Powering Autonomous Driving

One of the clearest and most vital uses of object detection is in autonomous vehicles and Advanced Driver-Assistance Systems (ADAS). A car that drives itself must always know what’s around it, and object detection serves as the digital “eyes” of these vehicles.

It allows self-driving cars to:

- Identify pedestrians and cyclists: This is crucial for safety and preventing accidents.

- Detect other vehicles: Locating them in every frame lets the system track their position, speed, and direction over time.

- Recognize traffic signs and signals: This lets the vehicle obey traffic laws.

- Spot obstacles: This includes construction cones or fallen debris, enabling them to navigate safely.

Without highly accurate, real-time object detection, self-driving would be impossible. The difference between a safe trip and a dangerous situation often depends on how accurately these systems respond in a split second.

Vision AI in Healthcare

In medicine, object detection is proving to be a game-changing tool that supports doctors and can help save lives. It offers a consistent, tireless “second opinion” that can augment human expertise.

Applications include:

- Tumor detection: Highlighting subtle abnormalities in MRI, CT, or X-ray scans that the human eye might miss, helping radiologists confirm their suspicions.

- Disease diagnosis: Detecting specific markers for various diseases, such as diabetic retinopathy in retinal scans or signs of pneumonia in chest X-rays.

- Surgical assistance: Guiding robotic surgery by identifying organs, tissues, and surgical instruments.

Importantly, object detection models increasingly match, and in certain diagnostic tasks even exceed, the accuracy of human radiologists. This can speed up diagnosis and lead to better patient outcomes.

Object Detection in Security and Surveillance

Object detection is fundamentally changing security and surveillance. Beyond simply recording, these systems can now actively monitor scenes and alert staff to specific events.

Its uses include:

- Monitoring vehicle flow: Tracking traffic patterns, detecting congestion, or identifying improperly parked vehicles.

- Detecting unauthorized activities: Recognizing trespassing, shoplifting, or suspicious behavior in public or private spaces.

- Tracking individuals: Following the movement of specific people through a monitored area for security purposes.

- Identifying suspicious objects: Flagging abandoned bags or unusual items in sensitive locations.

This active monitoring improves safety and efficiency, letting security staff focus on real threats rather than watching endless hours of footage.

Retail and Inventory Management with Object Detection

The retail sector uses object detection extensively to streamline operations, improve the customer experience, and gain better insight into how customers behave.

Consider these applications:

- Automated checkout experiences: Systems like Amazon Go use object detection to identify the items customers pick up, allowing them to simply walk out without waiting in a checkout line.

- Inventory management: Automatically tracking product quantities on shelves, alerting staff when restocking is needed, and reducing manual inventory checks.

- Analyzing customer behavior: Understanding how customers navigate stores, which products they interact with, and optimizing store layouts.

- Quality control: Identifying defective products on an assembly line.

These capabilities lead to lower costs, greater efficiency, and a smoother shopping experience for customers.
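Inventory tracking (like yield estimation in farming) often comes down to counting detections per class and comparing the counts with expectations. The sketch below shows that post-processing step under stated assumptions: detections arrive as (label, score) pairs from some detector, a 0.5 confidence threshold filters weak ones, and the shelf quotas and restock rule are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def count_by_class(detections: Iterable[Tuple[str, float]],
                   min_score: float = 0.5) -> Counter:
    """Count confident detections per class label."""
    return Counter(label for label, score in detections if score >= min_score)


def restock_alerts(counts: Counter, expected: Dict[str, int]) -> List[str]:
    """List products whose visible count is below the expected shelf quota."""
    return sorted(item for item, quota in expected.items() if counts[item] < quota)


# Hypothetical detector output for one shelf image.
detections = [
    ("cereal", 0.91), ("cereal", 0.88), ("cereal", 0.42),  # last one too uncertain
    ("soup", 0.95), ("soup", 0.81), ("soup", 0.77), ("soup", 0.66),
]
counts = count_by_class(detections)
print(dict(counts))
print(restock_alerts(counts, {"cereal": 4, "soup": 3, "pasta": 2}))
```

The confidence threshold is a design choice: set it too low and false detections inflate the counts; set it too high and real items go uncounted, triggering unnecessary restock alerts.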

Precision Farming with Computer Vision

Even farming, traditionally a low-tech industry, is being transformed by object detection. Drones and robotic systems are becoming the new farmhands, offering unprecedented accuracy and efficiency.

Applications include:

- Pest and disease detection: Camera-equipped drones running object detection scan crops for early signs of pests, disease, or nutrient deficiencies.

- Yield estimation: Counting fruits or vegetables on plants to predict harvest sizes.

- Weed identification: Distinguishing weeds from crops, which allows targeted herbicide application and reduces chemical use.

- Livestock monitoring: Tracking individual animals, monitoring their health, and identifying those in distress.

This “precision agriculture” cuts down on waste, makes the best use of resources, and helps farmers achieve higher yields more sustainably.

Sports Analytics: Leveraging Object Detection

In sports, object detection is moving beyond simple replays. It offers deep insights that can improve training, strategy, and even fan engagement.

It can:

- Track players and objects: Accurately follow the movement of every player on a field, as well as the ball (football, basketball, etc.).

- Analyze performance: Generate statistics on player speed, distance covered, shot trajectories, and tactical formations.

- Automated officiating: Assisting officials so they can make fairer, more consistent calls.

This level of detail gives coaches very useful data to improve strategies. Furthermore, it helps broadcasters offer richer and more helpful viewing experiences.
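Statistics like speed and distance covered come from tracking a player’s detected position across frames. A minimal sketch, assuming a tracker already supplies one (x, y) center per frame in pixels, a single pixels-per-meter calibration, and a known frame rate; all numbers below are hypothetical, and real systems map camera pixels to field coordinates with a proper calibration rather than one scale factor.

```python
import math
from typing import List, Tuple


def distance_and_speed(track: List[Tuple[float, float]], fps: float,
                       pixels_per_meter: float) -> Tuple[float, float]:
    """Return (total distance in m, average speed in m/s) for a per-frame track."""
    pixels = sum(math.dist(a, b) for a, b in zip(track, track[1:]))
    meters = pixels / pixels_per_meter
    seconds = (len(track) - 1) / fps  # time spanned by the frames
    return meters, (meters / seconds if seconds > 0 else 0.0)


# Hypothetical track: a player's box centers over 5 consecutive frames at 25 fps.
track = [(100, 400), (106, 408), (112, 416), (118, 424), (124, 432)]
meters, speed = distance_and_speed(track, fps=25, pixels_per_meter=40)
print(f"{meters:.2f} m at {speed:.2f} m/s")
```

Small jitter in the detected box centers accumulates into the distance total, so in practice tracks are usually smoothed before statistics like these are computed.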

Everyday Magic: Consumer and Robotics Applications

Beyond these large-scale industrial uses, object detection also powers many everyday conveniences and emerging technologies.

- Real-time filters on social media: Snapchat and Instagram filters that place virtual glasses or animal ears on your face rely on detecting your face and locating your facial features.

- Automatic tagging in photo management tools: Identifying friends and family in your photo library and suggesting tags.

- Robotics and automation: Object detection is vital for robots to understand what’s around them, helping them move through complex places, pick up specific items, and safely interact with objects and people. Whether it’s a factory robot or a robotic vacuum cleaner, object detection is key to its awareness of its surroundings.

The Hurdles Ahead: Challenges in Object Detection

Despite huge progress, object detection still faces significant challenges. The real world is messy and unpredictable, which makes it difficult even for the most advanced AI models. Solving these problems is a constant focus for researchers and engineers.

Handling Occlusion in Visual AI

One of the toughest challenges is occlusion, which happens when parts of an object are hidden by other objects, preventing the model from seeing the full picture. Imagine a car partially hidden behind a tree, or a person standing behind another person in a crowd.

When an object is occluded, the model receives incomplete visual information. This can lead to:

- Reduced feature visibility: Important characteristics of the object are missing.

- Ambiguity: It becomes hard to tell what the object is or where it fully ends.

- Information loss: Crucial details needed for accurate classification and localization are simply not available.

Robust object detection systems therefore need to infer an object’s presence and extent even when only a small part of it is visible. This often requires reasoning about context and models that can piece together fragmentary evidence.

Scale and Pose Variation Challenges

Objects in the real world don’t always appear neatly in the center of an image, nor are they always perfectly upright or at a steady size. Instead, they can appear:

- At very different sizes: A car seen far away is tiny, while the same car up close fills the frame.

- At various distances: Similar to size, distance changes how an object appears.

- In different orientations (pose variations): For instance, a person can be standing, sitting, lying down, or viewed from the front, side, or back.

Teaching a model to consistently detect the same object despite these large changes in size, distance, and angle is very hard. The model needs to learn features that are robust to scale and rotation, so that it recognizes the object regardless of its size or viewpoint. Achieving this requires varied training data and architectures designed to handle these changes, such as detectors that use feature maps at several scales.

Real-Time Object Detection: Balancing Speed and Accuracy

For many important uses, object detection isn’t helpful unless it runs in real time, meaning it processes images or video frames fast enough to keep up with the incoming data stream, often at 20 to 60 frames per second or more.

The challenge lies in balancing accuracy with speed. Highly accurate models often need more computation, which slows them down; conversely, very fast models may sacrifice some precision. Finding the right balance is key for real-world use. For example, a self-driving car needs to identify a suddenly appearing obstacle in milliseconds, not seconds. This demand drives constant innovation in more efficient model designs and specialized hardware.
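“Keeping up with the stream” reduces to simple arithmetic: at a given frame rate each frame has a fixed time budget, and the whole pipeline (pre-processing, the model, post-processing such as NMS) must fit inside it. The stage timings below are hypothetical numbers chosen only to illustrate the check.

```python
from typing import Dict


def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, to sustain `fps`."""
    return 1000.0 / fps


def meets_real_time(stage_ms: Dict[str, float], fps: float) -> bool:
    """True if the summed per-frame latency of all stages fits the budget."""
    return sum(stage_ms.values()) <= frame_budget_ms(fps)


# Hypothetical per-frame latencies for a detection pipeline (21.5 ms total).
pipeline = {"resize+normalize": 2.0, "model inference": 18.0, "nms": 1.5}
for fps in (20, 30, 60):
    print(f"{fps} fps: budget {frame_budget_ms(fps):.1f} ms, "
          f"real-time: {meets_real_time(pipeline, fps)}")
```

This check treats the stages as running one after another for each frame. Pipelined systems can overlap stages across frames to raise throughput, in which case throughput and per-frame latency have to be evaluated separately.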

Data Challenges in Object Detection

Deep learning models, especially for object detection, perform best with large amounts of high-quality, labeled data. However, obtaining such data brings its own problems:

- Limited data: For rare objects or special uses (e.g., finding a rare medical condition), getting enough training examples can be very hard and costly.

- Class imbalance: Some object classes appear much more frequently than others (e.g., cars are more common than bicycles in a traffic dataset). If a model is trained on uneven data, it might favor the more common classes and struggle to find less common objects accurately.

To address these data problems, common methods include data augmentation and specialized training strategies. Data augmentation creates modified copies of existing examples, for instance flipped, cropped, or color-shifted images. Re-weighting or re-sampling strategies give more importance to classes that appear less often.
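Both remedies can be sketched briefly. For detection, augmentation must transform the boxes along with the pixels: flipping an image horizontally mirrors each box’s x-coordinates. For class imbalance, one common re-weighting scheme gives each class a weight inversely proportional to its frequency. Both helpers are illustrative assumptions (nested-list images, (x1, y1, x2, y2) boxes measured on pixel edges from 0 to the image width, weights rescaled to average 1), not any library’s API.

```python
from collections import Counter
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), pixel-edge coordinates


def hflip(image: List[list], boxes: List[Box]) -> Tuple[List[list], List[Box]]:
    """Mirror an image left-right and update its boxes to match."""
    width = len(image[0])
    flipped = [row[::-1] for row in image]
    # A box spanning [x1, x2] ends up spanning [width - x2, width - x1].
    new_boxes = [(width - x2, y1, width - x1, y2) for x1, y1, x2, y2 in boxes]
    return flipped, new_boxes


def inverse_frequency_weights(labels: List[str]) -> Dict[str, float]:
    """Weight each class by 1 / count, rescaled so the weights average to 1."""
    counts = Counter(labels)
    raw = {c: 1.0 / n for c, n in counts.items()}
    scale = len(raw) / sum(raw.values())
    return {c: w * scale for c, w in raw.items()}


image = [list(range(10)) for _ in range(2)]  # a 2 x 10 toy "image"
_, boxes = hflip(image, [(1, 0, 4, 2)])
print(boxes)

labels = ["car"] * 90 + ["bicycle"] * 10  # imbalanced traffic dataset
print(inverse_frequency_weights(labels))
```

With 90 cars and 10 bicycles, each bicycle example ends up weighted nine times as heavily as a car example, so mistakes on the rare class cost more during training.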

Visual Challenges in Complex Scenes

The real world is rarely a clean, empty place. Objects often appear against complex, cluttered backgrounds, which makes it hard for a model to distinguish the object from the surrounding noise. Imagine trying to find a specific bird in a dense forest, or a small item on a crowded supermarket shelf.

Changing lighting conditions (bright sunlight, deep shadows, low light, artificial light) can also greatly alter how an object looks, changing its colors, textures, and how its edges appear. A model trained mainly in bright conditions, for example, might struggle in dimly lit places. Robust detectors must work well across many different lighting situations and backgrounds without getting confused.

Spotting the Tiny Details: Small Object Detection

Finding small objects is a very hard problem. A small object, by its nature, occupies very few pixels in an image, so there is less visual information available: fewer features, less texture, and less shape detail for the model to learn from.

For instance, finding a distant traffic sign or a small drone high in the sky requires the model to preserve fine spatial detail. Current architectures often struggle because downsampling operations in CNNs (which reduce the spatial resolution of feature maps) can cause the sparse information from small objects to be lost completely. Special techniques, such as using higher-resolution feature maps or designing dedicated small-object detection heads, are therefore active areas of research.
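A little arithmetic shows why downsampling hurts small objects. After a total stride of 32 (a common value for the deepest feature map of a CNN backbone), one feature-map cell summarizes a 32 x 32-pixel region of the input. The input size and object sizes below are illustrative.

```python
def feature_map_size(input_px: int, stride: int) -> int:
    """Spatial size of a feature map after downsampling by `stride`."""
    return input_px // stride


def cells_covered(object_px: float, stride: int) -> float:
    """How many feature-map cells an object spans along one dimension."""
    return object_px / stride


input_px = 640
for stride in (8, 16, 32):
    size = feature_map_size(input_px, stride)
    print(
        f"stride {stride:>2}: {size}x{size} map, "
        f"a 12 px sign spans {cells_covered(12, stride):.2f} cells, "
        f"a 200 px car spans {cells_covered(200, stride):.2f}"
    )
```

At stride 32 the sign spans well under one cell, so its few pixels are blended with background into a single feature vector, while at stride 8 it still spans more than one cell. This is the motivation for the higher-resolution feature maps mentioned above.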

Open-World Learning for Visual Systems

Most current object detection models work in a “closed-world” setting: they are trained to find a fixed set of predefined classes (e.g., cars, people, traffic lights). Anything outside that list is typically missed or mistaken for one of the known classes.

The challenge of open-world learning is to build models that can incrementally learn to find new classes without being fully retrained on the whole dataset every time, much as humans keep learning about new objects throughout their lives. This ability is key for truly intelligent systems that can adapt to ever-changing environments. It remains an active and complex research area.

The Future of Object Detection: Emerging Trends

The field of object detection is constantly evolving, as researchers keep pushing the limits of what’s possible. The future promises even more robust, accurate, and efficient systems, which will expand their use into new and exciting areas. Let’s look at some important trends shaping this future.

Improving Object Detection: Efficiency and Precision

The search for better models never stops. Ongoing research keeps refining deep learning architectures, aiming for higher accuracy together with better computational efficiency: models that are not only more precise in their detections but also run faster and need less processing power.

- Iterations of YOLO: The YOLO family continues to evolve rapidly, with versions like YOLOv9, YOLOv10, and YOLOv11. These introduce architectural improvements, better training strategies, and faster inference, leading to more reliable and responsive applications.

- Efficient Architectures: New designs aim to reduce the number of parameters and computations while maintaining or even improving performance, thereby making models suitable for wider use.

Edge AI for Visual Perception

As AI becomes more widespread, there’s a strong push to bring advanced capabilities directly to devices at the “edge” of networks, such as smartphones, drones, IoT devices, and in-car systems. This is known as Edge AI.

The goal is to make object detection models run well on hardware with limited computing power, memory, and battery life. Running on the device reduces latency (there’s no need to send data to a cloud server and wait for a response), improves privacy (data stays on the device), and allows strong performance even without an internet connection. Imagine a smart doorbell that detects packages accurately and instantly without needing the cloud.

Self-Supervised Learning in Object Detection

One of the biggest problems in deep learning is the need for huge amounts of hand-labeled data, which takes a lot of time and money to create. Self-supervised learning (SSL) aims to reduce this need.

In SSL, models learn useful features from unlabeled data by solving “pretext tasks.” For example, a model might be trained to predict missing parts of an image or to recognize different augmentations of the same image. In doing so, it learns rich visual representations without human labeling. These representations can then be fine-tuned for specific object detection tasks using less labeled data, which can greatly cut labeling costs, especially in data-heavy fields like self-driving cars.
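The “recognize different augmentations of the same image” pretext task is often trained with a contrastive loss such as InfoNCE: the embeddings of two views of the same image should be more similar to each other than to views of other images in the batch. The pure-Python sketch below computes a simplified, one-directional form of that loss for precomputed embeddings; the encoder and augmentations are assumed to exist elsewhere, and the temperature of 0.1 is an illustrative choice. Methods such as SimCLR build on objectives of this kind.

```python
import math
from typing import List

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def info_nce(view_a: List[Vector], view_b: List[Vector], temperature: float = 0.1) -> float:
    """Average InfoNCE loss: view_a[i] should match view_b[i] (its positive)
    and not view_b[j] for j != i (negatives from other images)."""
    losses = []
    for i, anchor in enumerate(view_a):
        logits = [cosine(anchor, other) / temperature for other in view_b]
        # Cross-entropy with the positive at index i, via a stable log-sum-exp.
        m = max(logits)
        log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
        losses.append(log_sum - logits[i])
    return sum(losses) / len(losses)


# Hypothetical 3-D embeddings of two augmented views of three images.
views_1 = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.1], [0.1, 0.0, 1.0]]
aligned = [[0.9, 0.2, 0.0], [0.1, 0.9, 0.1], [0.0, 0.1, 0.9]]
shuffled = [aligned[1], aligned[2], aligned[0]]  # positives no longer match
print(f"aligned: {info_nce(views_1, aligned):.3f}, shuffled: {info_nce(views_1, shuffled):.3f}")
```

The loss is low when matching views are close and high when they are not, so minimizing it teaches the encoder to produce similar representations for different views of the same image, with no labels involved.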

Beyond Sight: Multi-Modal Vision

The real world isn’t only seen; it’s also heard, felt, and understood through context. Multi-modal object detection therefore combines data from many sources, including images, text, lidar, radar, and audio sensors. This improves accuracy and gives a richer, more context-aware understanding of objects in complex environments.

For example, a self-driving car fuses data from many sensors: camera images, but also lidar for depth and radar for speed and distance in bad weather. This combined view helps it understand its environment more completely and reliably than any single sensor could, because each sensor compensates for the limitations of the others.

Blending Realities: Object Detection in Augmented Reality

Augmented Reality (AR), which overlays digital information onto the real world, is a natural partner for object detection. By accurately locating real-world objects, AR applications can blend in virtual content that interacts convincingly with the physical world.

Imagine an AR app that identifies a specific piece of furniture in your living room and shows you how it would look in another color or with other accessories. Or picture a maintenance worker wearing AR glasses that highlight specific parts on a machine and display helpful repair instructions. In both cases, object detection is the foundational layer that makes these interactive experiences possible.

Cross-Domain Adaptation in Visual AI

Currently, a model trained on street scenes (e.g., for self-driving cars) might not work well in a totally different setting, such as a farm field or a factory. Cross-domain adaptation focuses on building models that can transfer knowledge from one domain to another without extensive retraining.

This includes methods that help models generalize better to new environments or datasets where object appearance might be very different. Ultimately, the goal is to create flexible object detection systems that can be deployed in many different settings with little effort.

Mastering Object Detection: Key Takeaways

Object detection, driven by advances in deep learning, has profoundly changed how machines see and interact with our world. From the precision of two-stage detectors to the speed of one-stage designs like YOLO and the emerging power of Transformers, the field offers a range of approaches. These technologies are not just ideas; they power important uses in smart transport, healthcare, smart retail, and sustainable farming.

You’ve seen how these systems tackle complex problems such as occlusion, changes in scale, and the need for real-time performance. You’ve also glimpsed an exciting future of breakthroughs in edge AI, self-supervised learning, and multi-modal fusion, which promise even smarter and more adaptable vision systems. As these technologies mature, they will open up countless new capabilities and make machines an ever deeper part of how we see the world.

The Future of Object Detection: Your Insights?

The constant change in computer vision and object detection brings both huge chances and important points to think about. What uses do you think will see the biggest changes from these steps forward in the coming years? Share your insights and predictions below!