Imagine a world where machines don’t just follow instructions. Instead, they learn from what they experience. They make smarter choices over time, much like a child learning to ride a bike. This isn’t science fiction; it’s the powerful reality of Reinforcement Learning (RL). This exciting branch of artificial intelligence is rapidly transforming how we interact with technology and solve complex problems.

At its heart, Reinforcement Learning uses trial and error. An intelligent agent, whether a robot or software, learns to navigate a dynamic environment. It receives rewards for desirable choices and penalties for undesirable ones. Through numerous iterations, it refines its strategy to achieve the highest cumulative “score” – its total reward. This fundamental principle distinguishes RL from other machine learning paradigms; it offers a unique approach to developing adaptive intelligence.

Supervised learning requires large datasets of labeled examples. In contrast, RL excels where such labels are nonexistent or difficult to acquire. For instance, think of teaching a machine to play a video game. You don’t provide it with every possible move and outcome. Instead, you let it play. You assign it a score for winning and allow it to infer the rules through gameplay. Furthermore, RL differs from unsupervised learning by having a clear objective: to maximize reward. It doesn’t just find hidden patterns. Thus, Reinforcement Learning offers a distinctive framework for this experiential learning.

What is Reinforcement Learning?

This article will guide you on a journey through the world of Reinforcement Learning. We’ll delve into its core concepts, agent learning mechanisms, remarkable real-world applications, and the challenges it still confronts. Ultimately, you’ll gain a comprehensive understanding of this exciting field and its immense potential to shape our future.

The Core Pillars of Reinforcement Learning: Understanding the Basics

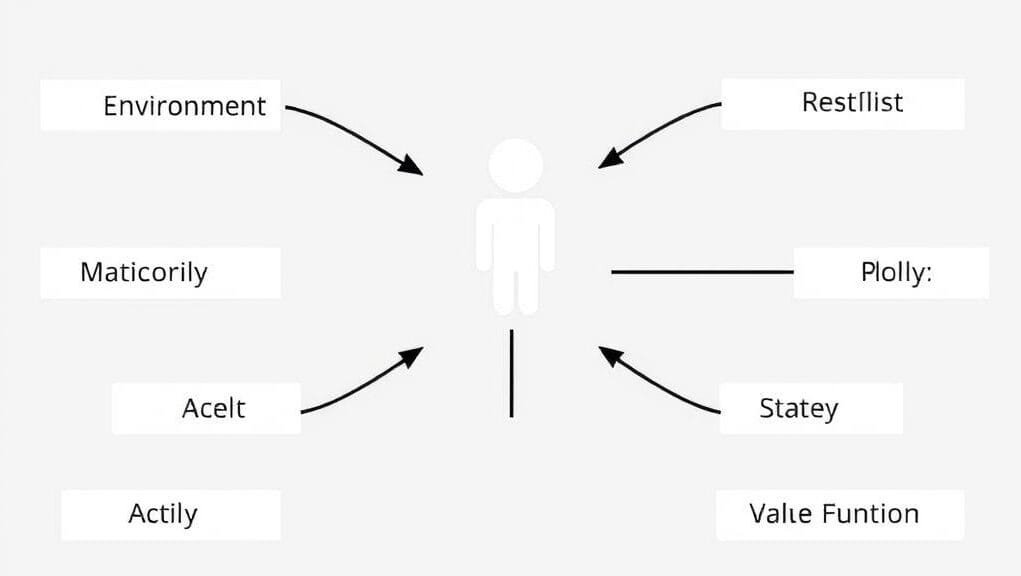

To truly grasp Reinforcement Learning, you need to understand its fundamental components. Together, they form a learning system capable of making complex decisions. Think of them as the principal players in an interactive drama, each with a distinct role in the learning process.

Let’s break down these key ideas:

Defining the Core Components

- The Agent: This is the operational ‘brain’: the learner and decision-maker. It observes the environment, selects actions, and strives to achieve its objectives. In a self-driving car, the agent is the software that controls the car; in a game, it is the AI player.

- The Environment: This is the world with which the agent interacts. It could be a virtual space, such as a chess board or a video game, or a physical setting, like a factory floor or a city street. The environment tells the agent about its current state and provides feedback (rewards) based on the agent’s actions. This interplay is central to the design of any RL system.

- The State: At any given moment, the state describes the current situation of the environment. It provides the agent with the necessary information for decision-making. For instance, for a robot navigating a room, the state might include its current position, obstacle locations, and proximity to a target. The state is a critical element of any Reinforcement Learning problem.

Elements of Interaction and Feedback

- The Action: These are the moves or choices the agent can make within the environment. For example, if the agent is a chess player, an action is moving a piece; if it is a thermostat, an action might be adjusting the temperature. The agent selects an action based on its current state and its learned policy, and the consequences of those actions are what drive learning in Reinforcement Learning.

- The Reward: This is the immediate feedback the environment provides after the agent acts. Rewards are the only signal that tells the agent how well it is doing. A positive reward makes the agent more inclined to repeat an action in a similar situation, while a negative reward (often termed a penalty) teaches it to avoid that action. What the agent ultimately strives to maximize is not any single reward but the sum of future rewards, often discounted so that nearer rewards count more.

Agent’s Strategic Elements

- The Policy: This is the agent’s strategy for selecting actions. Formally, a policy maps states to actions: it dictates what the agent should do in any given situation. Through learning, the agent tries to find an optimal policy, one that yields the highest expected cumulative reward. Finding such a policy is the primary objective of Reinforcement Learning.

- The Value Function: Rewards are immediate, but the value function takes the long view. It estimates the total future reward the agent can expect, starting either from a given state (the state-value function) or from taking a specific action in a given state (the action-value function), assuming the agent follows its current policy afterward. This helps the agent understand the long-term consequences of its choices, beyond immediate rewards.
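The following minimal Python sketch makes the difference between immediate reward and long-term value concrete. The discount factor `gamma` of 0.9 and the reward sequences are made-up assumptions. `discounted_return` computes the return G = r1 + gamma*r2 + gamma^2*r3 + ... for one sequence of rewards. `estimate_value`, a hypothetical helper, averages the returns of several episodes that start in the same state, which is the simplest way to estimate that state's value.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards, with the k-th future reward weighted by gamma**k."""
    total = 0.0
    # Work backwards using G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


def estimate_value(episodes, gamma=0.9):
    """Average the discounted returns of episodes that all start in the same state."""
    returns = [discounted_return(ep, gamma) for ep in episodes]
    return sum(returns) / len(returns)


# Hypothetical reward sequences observed after starting in the same state.
episodes = [[0, 0, 1], [0, 1], [1]]
print(discounted_return([0, 0, 1]))
print(estimate_value(episodes))
```

A reward of 1 that arrives two steps late is worth only 0.81 here. That discounting is how the value function weighs distant rewards against near ones.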

Key Concepts in Agent-Environment Interaction

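Together, these pieces form a loop. The agent observes the current state and chooses an action according to its policy. The environment then responds with a reward and a new state. Repeating this loop is what drives learning in Reinforcement Learning.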
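The loop can be sketched in a few lines of Python. The `LineWorld` environment, its `reset`/`step` methods, and the two policies are hypothetical illustrations, not any particular library's API. The agent starts at position 0 on a short line and earns a reward of 1 only when it reaches the right-hand end.

```python
import random


class LineWorld:
    """Hypothetical environment: positions 0..size-1 on a line; reaching the right end ends the episode."""

    def __init__(self, size=5):
        self.size = size
        self.state = 0

    def reset(self):
        """Start a new episode and return the initial state."""
        self.state = 0
        return self.state

    def step(self, action):
        """Apply an action (-1 = left, +1 = right); return (next_state, reward, done)."""
        self.state = min(max(self.state + action, 0), self.size - 1)
        done = self.state == self.size - 1
        reward = 1.0 if done else 0.0
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    """The agent-environment loop: observe the state, act, receive a reward and the next state."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                  # the agent decides
        state, reward, done = env.step(action)  # the environment responds
        total_reward += reward
        if done:
            break
    return total_reward


rng = random.Random(0)
print(run_episode(LineWorld(), lambda s: rng.choice([-1, 1])))  # random policy: may or may not reach the goal
print(run_episode(LineWorld(), lambda s: 1))  # always moving right: 1.0
```

The loop itself never changes. Learning means replacing the fixed `policy` function with one that improves based on the rewards it receives.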

Mathematical Foundations of Reinforcement Learning: Markov Decision Processes (MDPs)

These components are usually described formally with a mathematical model called a Markov Decision Process (MDP). An MDP simplifies real-world scenarios by assuming that the next state and reward depend only on the current state and the action taken, not on the entire history of preceding events (the Markov property). This assumption lets RL algorithms break a long decision problem into a sequence of one-step decisions, each evaluated from the current state alone. It underpins many Reinforcement Learning solutions.
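As an illustration, consider a hypothetical two-state MDP for a robot whose battery is either "low" or "high". The states, actions, probabilities, and rewards are all made up. The transition table is indexed only by the current state and action, which is exactly the Markov assumption. `value_iteration` is a standard dynamic-programming sketch. It repeatedly applies a one-step lookahead (the Bellman optimality backup) to every state, so the long-horizon problem is solved through many small one-step decisions.

```python
# Hypothetical MDP: P[state][action] -> list of (probability, next_state, reward).
# Outcomes depend only on the current state and action (the Markov property).
P = {
    "low": {
        "wait": [(1.0, "low", 0.0)],
        "recharge": [(1.0, "high", -1.0)],
    },
    "high": {
        "wait": [(1.0, "high", 0.0)],
        "work": [(0.8, "high", 2.0), (0.2, "low", 2.0)],
    },
}


def q_value(P, V, s, a, gamma):
    """Expected one-step reward plus the discounted value of where the action leads."""
    return sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])


def value_iteration(P, gamma=0.9, tol=1e-8):
    """Apply one-step Bellman optimality backups until the values stop changing."""
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            best = max(q_value(P, V, s, a, gamma) for a in P[s])
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break
    # The greedy policy with respect to the converged values.
    policy = {s: max(P[s], key=lambda a: q_value(P, V, s, a, gamma)) for s in P}
    return V, policy


V, policy = value_iteration(P)
print(policy)  # {'low': 'recharge', 'high': 'work'}
print({s: round(v, 2) for s, v in V.items()})
```

With a discount factor of 0.9, the procedure settles on working when the battery is high and recharging when it is low. Note that it needs the full table `P`, which is the "complete model" that dynamic programming requires.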

How Reinforcement Learning Agents Learn: Strategies for Success

Reinforcement Learning agents learn through continuous interaction with their environment. They observe the outcomes of their actions and refine their policy accordingly. This is far more nuanced than memorization: it requires balancing competing goals and choosing learning methods carefully. Throughout, the objective stays the same: find a policy that maximizes cumulative reward over the long run.

The Exploration-Exploitation Dilemma

One of the fundamental challenges in Reinforcement Learning is striking the right balance between exploration and exploitation. Consider it akin to trying a new restaurant versus ordering your favorite dish.

- Exploration: This involves trying new actions that may not look best right now, in order to discover potential rewards. For example, an exploring agent might venture down an untried path, hoping to uncover a shortcut or a hidden treasure. Exploration is crucial for finding truly optimal policies, because an agent that sticks only to known paths may never discover better alternatives.

- Exploitation: This involves taking the actions known to yield good rewards based on current knowledge. If an agent has learned that a specific action in a certain state typically results in a positive reward, it chooses that action to make the most of what it already knows.

The challenge is that an agent cannot do both at the same time. Too much exploration leads to poor results because the agent fails to use what it has already learned. Too much exploitation can lock the agent into a mediocre solution, so that it never discovers better strategies. Striking the right balance is therefore crucial for effective learning in Reinforcement Learning.
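One common way to manage this trade-off is epsilon-greedy action selection. Below it is sketched on a simple multi-armed bandit, a single-state problem where each "arm" pays a noisy reward. The arm means, the noise level, and `epsilon = 0.1` are illustrative assumptions. With probability `epsilon` the agent explores by picking a random arm. Otherwise it exploits the arm with the highest estimated value.

```python
import random


def epsilon_greedy(q_values, epsilon, rng):
    """With probability epsilon explore (random action); otherwise exploit (best estimate)."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def run_bandit(true_means, epsilon=0.1, steps=5000, seed=0):
    """Learn each arm's average reward from noisy pulls chosen epsilon-greedily."""
    rng = random.Random(seed)
    q = [0.0] * len(true_means)  # estimated value of each arm
    n = [0] * len(true_means)    # number of times each arm was pulled
    for _ in range(steps):
        a = epsilon_greedy(q, epsilon, rng)
        reward = rng.gauss(true_means[a], 1.0)  # hypothetical noisy payout
        n[a] += 1
        q[a] += (reward - q[a]) / n[a]          # incremental sample average
    return q, n


q, n = run_bandit([0.2, 0.5, 0.9])
print("estimates:", [round(v, 2) for v in q])
print("pulls:", n)
```

Raising `epsilon` spends more pulls on exploration. Setting it to 0 makes the agent purely greedy, so it can lock onto whichever arm happened to look best early on.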

Key Learning Approaches

Several established methods guide RL agents through their learning journey. They differ mainly in how they estimate value functions and update policies, which are fundamental aspects of how Reinforcement Learning algorithms operate.

- Dynamic Programming (DP): These methods are powerful, but they require a complete model of the environment: the agent must know precisely how each action changes the state and what reward it produces. Because such a model is rarely available, DP is impractical for many real-world scenarios. Nonetheless, it provides the theoretical foundation for understanding how optimal policies can be found in Reinforcement Learning.

- Monte Carlo (MC) Methods: Unlike DP, Monte Carlo methods do not require a model. Instead, they learn from complete episodes of experience, that is, full sequences of states, actions, and rewards from start to finish. The agent averages the returns observed over many such episodes to estimate the value of states and actions. These methods played an important role in early forms of Reinforcement Learning.

- Temporal Difference (TD) Learning: TD learning is a cornerstone of modern RL and combines ideas from both DP and MC. Like Monte Carlo, it learns directly from experience without a model. Like dynamic programming, it updates its estimates using other estimates: the value of an earlier state is adjusted using the current estimate of the state that follows it, a technique known as bootstrapping. As a result, it does not have to wait until an episode ends to learn, and many advanced Reinforcement Learning algorithms are built on TD learning.
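The difference between Monte Carlo and TD learning shows up clearly in their update rules. The sketch assumes a tiny, hypothetical chain A → B → end in which only the final step pays a reward of 1. It uses a step size `alpha` of 0.1 and no discounting. Monte Carlo updates both states toward the actual return once the episode is over. TD(0) updates each state as soon as the next state is observed, using the current estimate of that next state as a stand-in for the rest of the return.

```python
def mc_update(V, episode, alpha=0.1, gamma=1.0):
    """Monte Carlo: after the episode ends, move each V(s) toward the return that followed s."""
    G = 0.0
    # episode = [(state, reward received after leaving that state), ...]
    for state, reward in reversed(episode):
        G = reward + gamma * G
        V[state] += alpha * (G - V[state])
    return V


def td0_update(V, state, reward, next_state, alpha=0.1, gamma=1.0, terminal=False):
    """TD(0): update right away, bootstrapping from the current estimate of the next state."""
    target = reward if terminal else reward + gamma * V[next_state]
    V[state] += alpha * (target - V[state])
    return V


episode = [("A", 0.0), ("B", 1.0)]  # A -> B (reward 0), B -> end (reward 1)

V_mc = {"A": 0.0, "B": 0.0}
mc_update(V_mc, episode)

V_td = {"A": 0.0, "B": 0.0}
for _ in range(2):  # two episodes, updated step by step
    td0_update(V_td, "A", 0.0, "B")
    td0_update(V_td, "B", 1.0, None, terminal=True)

print(V_mc)
print(V_td)
```

After the first episode, TD(0) has not yet changed V(A), because V(B) was still 0 when A was updated. The reward only reaches A during the second episode. Monte Carlo moves both states at once, but only after the episode finishes.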

Popular Temporal Difference Methods

Two prominent and important TD methods are:

- Q-learning: This method learns the optimal action-value function (Q-function) regardless of the policy the agent is currently following, which makes it an off-policy method. In other words, it can learn the value of the best actions even while the agent is taking random exploratory actions. It is a robust and widely used method in Reinforcement Learning.

- SARSA (State-Action-Reward-State-Action): Unlike Q-learning, SARSA learns the value of the policy the agent is actually executing, exploration included, which makes it an on-policy method. Because its estimates account for the occasional bad outcomes of its own exploratory moves, SARSA tends to learn more cautious behavior. Both methods are fundamental to modern Reinforcement Learning applications.
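The two update rules differ in a single term, which the sketch below makes explicit. It assumes a tabular Q-function stored in a dictionary keyed by (state, action), a step size `alpha` of 0.5, and a discount `gamma` of 0.9. The states, actions, and starting values are hypothetical. Q-learning bootstraps from the best action available in the next state. SARSA bootstraps from the action the agent actually takes next, including exploratory ones.

```python
from collections import defaultdict


def q_learning_update(Q, s, a, r, s_next, actions, alpha=0.5, gamma=0.9):
    """Off-policy target: the best action value in the next state, whatever the agent does next."""
    best_next = max(Q[(s_next, a2)] for a2 in actions)
    Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])


def sarsa_update(Q, s, a, r, s_next, a_next, alpha=0.5, gamma=0.9):
    """On-policy target: the value of the action the agent actually chose next (even if exploratory)."""
    Q[(s, a)] += alpha * (r + gamma * Q[(s_next, a_next)] - Q[(s, a)])


actions = ["left", "right"]
# Hypothetical starting values: from "s1", "right" is good and "left" is bad.
start = {("s1", "right"): 10.0, ("s1", "left"): -10.0}
Q_ql = defaultdict(float, start)
Q_sarsa = defaultdict(float, start)

# The agent moved "right" from "s0" to "s1" (reward 0), then explored "left".
q_learning_update(Q_ql, "s0", "right", 0.0, "s1", actions)
sarsa_update(Q_sarsa, "s0", "right", 0.0, "s1", a_next="left")

print(Q_ql[("s0", "right")])     # 4.5
print(Q_sarsa[("s0", "right")])  # -4.5
```

Here the agent's next move from `s1` is an exploratory `left` with a low value. Q-learning ignores that move and credits `s0` with the value of the best option. SARSA's estimate instead reflects the poor action the agent actually took, which is why it tends to steer away from risky states while it is still exploring.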

The Rise of Deep Reinforcement Learning (DRL)

A major advance in Reinforcement Learning came from combining it with deep neural networks, creating Deep Reinforcement Learning (DRL). Traditional RL methods keep a separate value for every state or state-action pair. That approach breaks down when there are too many possible states, such as the pixel data of a video game or the sensor readings of a self-driving car. It is simply infeasible to store and update a value for every single state.

A conceptual diagram showing a deep neural network processing raw sensory input (like game pixels) and outputting actions, all within a Reinforcement Learning loop.

Deep neural networks can learn complex patterns from vast amounts of raw sensory data, which made them a natural fit. In DRL, they serve as powerful function approximators for the policy, the value function, or both. Instead of memorizing a value for every state-action pair, the network learns to generalize from its experiences to states it has never seen. This lets the agent handle vast and complex environments. The innovation underlies many of RL’s most significant successes and has substantially propelled the field of Reinforcement Learning forward.
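The key idea is to replace a lookup table with a function whose shared parameters generalize across states, and this can be shown without a deep network. The sketch is a deliberately simplified stand-in. It uses a linear model over two hypothetical hand-made features instead of a neural network, and applies a semi-gradient TD(0) update; for a linear model, the gradient of the prediction is just the feature vector. In DRL, the same kind of update would adjust the weights of a deep network through backpropagation.

```python
def features(position, size=10):
    """Hypothetical features for a position on a line: a bias term and the normalized position."""
    return [1.0, position / (size - 1)]


def predict(w, x):
    """Linear value estimate: dot product of weights and features."""
    return sum(wi * xi for wi, xi in zip(w, x))


def semi_gradient_td0(w, x, reward, x_next=None, alpha=0.1, gamma=0.9, terminal=False):
    """Move the shared weights toward the TD target; for a linear model the gradient is x itself."""
    target = reward if terminal else reward + gamma * predict(w, x_next)
    error = target - predict(w, x)
    return [wi + alpha * error * xi for wi, xi in zip(w, x)]


w = [0.0, 0.0]
# One observed transition: from position 8 the agent reaches the goal and receives reward 1.
w = semi_gradient_td0(w, features(8), reward=1.0, terminal=True)

# Position 9 was never visited, yet its estimate changed because the weights are shared.
print(predict(w, features(9)))
print(predict(w, features(0)))
```

A table-based learner would still assign 0 to position 9 here. Generalization cuts both ways, though. Position 0, far from the goal, also gained value, which is one reason function approximation can make learning less stable.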

Real-World Impact: Where Reinforcement Learning Shines

Reinforcement Learning is no longer merely a theoretical concept. It is a practical tool that is transforming industries and tackling complex problems. Its ability to learn optimal strategies in dynamic, uncertain settings makes it well suited to applications where traditional rule-based systems fall short. Let’s explore some of the most compelling real-world applications where RL is making a tangible difference.

Robotics: Enabling Intelligent Machines

From factory floors to outer space, robotics presents a natural fit for Reinforcement Learning. Robots must learn to maneuver, grasp objects, and navigate often uncertain environments. Specifically, RL empowers robots to:

- Optimize Movements: Learn precise, efficient ways to carry out tasks, such as picking up irregularly shaped objects or performing delicate surgery. Reinforcement Learning offers flexible control in these settings.

- Navigate Complex Environments: Develop strategies to avoid obstacles, find efficient paths, and adapt to new layouts or unforeseen changes in warehouses and hazardous areas.

- Perform Human-like Tasks: Robots can learn through trial and error to execute tasks that are challenging to program, such as folding laundry or cooking. Ultimately, this demonstrates the adaptability of Reinforcement Learning.

Imagine a robot arm on a factory line learning to handle novel parts without needing reprogramming. It achieves this simply by interacting with them and receiving feedback on its performance. Indeed, this flexibility is revolutionizing factory work, thanks to advancements in Reinforcement Learning.

Gaming: Outperforming Human Experts

The gaming world has become a prominent testing ground for RL. Games have unambiguous rules and clear reward signals, and they allow millions of interactions to be simulated cheaply, which makes them ideal for training RL agents. As a result, Reinforcement Learning has achieved many of its most notable successes in this domain.

- Strategy Games: DeepMind’s AlphaGo famously defeated Lee Sedol, one of the world’s top Go players, in 2016; Go is significantly more complex than chess. Other RL systems have shown exceptional proficiency in chess and poker. These successes underscore the power of Reinforcement Learning for making intelligent decisions.

- Video Games: Agents have mastered classic Atari games, surpassing human performance on many of them. More recently, RL agents have shown remarkable proficiency in complex modern video games, learning sophisticated strategies and how to coordinate with teammates in real time.

These achievements do more than advance AI. They also give researchers invaluable insight into how complex learning unfolds in Reinforcement Learning systems.

Autonomous Driving: Navigating Our Roads

Self-driving cars rely heavily on advanced AI, and Reinforcement Learning plays an important role. Autonomous vehicles operate in highly dynamic and uncertain environments, so they must make good decisions continuously.

- Trajectory Optimization: RL helps self-driving cars learn the smoothest, safest, and most efficient trajectories to follow.

- Motion Planning: Agents can learn to anticipate the movements of other vehicles and pedestrians and make real-time decisions about acceleration, braking, and steering. These are key challenges for Reinforcement Learning in self-driving systems.

- Controller Optimization: RL can fine-tune the car’s control systems for varying conditions, helping ensure a comfortable and safe ride. This application highlights RL’s potential in safety-critical systems, where every decision carries significant consequences, and suggests that Reinforcement Learning will be central to the future of travel.

Industrial Automation: Streamlining Operations

Beyond robotics, RL is permeating broader industrial applications. It enhances the efficiency of complex systems and reduces operational costs. Indeed, Reinforcement Learning provides the intelligent decision-making required for these systems.

- Data Center Cooling: Google famously employed RL to reduce the energy used for cooling its data centers by 40%. The agent learned cooling strategies from data collected by thousands of sensors and adjusted the cooling controls accordingly. Similar approaches can optimize other utility systems.

- Supply Chain Optimization: RL can learn to manage inventory levels, optimize logistics, and adapt production schedules in real time to meet fluctuating demand. Reinforcement Learning excels at dynamic optimization problems like these.

These examples demonstrate RL’s capacity to enhance operational efficiency and sustainability in large-scale operations. Evidently, this underscores RL’s broad applicability.

Finance and Trading: Intelligent Investment Strategies

The financial sector, with its constantly fluctuating and reward-centric nature, is a fertile ground for Reinforcement Learning. Consequently, its application here is growing.

- Algorithmic Trading: RL agents can devise intelligent trading strategies. They learn when to buy, sell, or hold assets to maximize profit based on market data.

- Portfolio Management: Agents can optimize investment portfolios. They dynamically adjust them as needed to manage risk and achieve specific financial objectives. Clearly, this is another domain where Reinforcement Learning offers substantial benefits.

- Fraud Detection: Although fraud detection usually relies on other methods, RL can help systems adapt to emerging fraud patterns by learning continuously from actions and feedback.

RL presents a novel paradigm for automated financial decision-making. However, ethical considerations are paramount in this application of Reinforcement Learning.

Healthcare: Personalized and Adaptive Treatments

Reinforcement Learning holds immense promise for transforming healthcare through personalized and adaptive solutions, including:

- Personalized Medicine: RL can assist in devising optimal treatment regimens for individual patients. It adapts plans based on a patient’s response to medication and disease progression.

- Drug Dose Optimization: Agents can learn to adjust drug doses dynamically in real time for optimal efficacy and minimal side effects, a task well suited to the adaptive capabilities of Reinforcement Learning.

- Robotic-Assisted Surgery: RL can enhance the precision and autonomy of surgical robots, potentially leading to safer and more effective surgeries.

The potential for RL to improve patient outcomes and streamline medical processes is truly transformative.

Natural Language Processing (NLP): Beyond Static Responses

In NLP, tasks such as dialogue generation and language translation often involve sequential decision-making. Here, one word choice influences subsequent choices. Therefore, Reinforcement Learning is increasingly being employed in these domains.

- Dialogue Generation: RL agents can learn to generate more coherent, contextually appropriate, and engaging responses by receiving rewards for successful interactions. This is a complex area for Reinforcement Learning that requires sophisticated models.

- Text Summarization: RL can identify the most salient sentences for a summary. It aims to convey the maximum information with the fewest words, based on a reward objective.

This application helps foster more natural and effective communication between humans and computers. Therefore, NLP is a growing field for RL.

Recommendation Systems: Tailored Experiences

You often encounter Reinforcement Learning when using streaming services or online shopping platforms. Indeed, it underpins many of these personalized experiences.

- Personalized Content: Platforms like Netflix and Amazon leverage RL to continuously adapt and refine their content and product recommendations. The agent learns from your viewing or purchase history and from how you interact with its suggestions, then predicts what you’ll like next. This constant feedback loop plays to a core strength of Reinforcement Learning.

- Adaptive User Interfaces: RL can even dynamically alter the appearance and features of an app or website based on individual user behavior. This enhances the overall user experience. Ultimately, the aim is to create highly responsive designs.

The goal is to provide a seamless, highly relevant experience that sustains user engagement.

Energy Management: Smart and Sustainable Power Grids

Optimizing energy consumption and distribution is a critical global challenge, and RL offers intelligent solutions. Reinforcement Learning plays a vital role in modernizing energy systems.

- Smart Buildings: RL agents can learn from usage patterns, weather conditions, and energy prices to optimize heating, ventilation, and air conditioning (HVAC) systems for peak performance, significantly reducing energy waste.

- Grid Management: RL can assist in managing renewable energy sources. It can forecast changes in demand and balance the power grid as needed to maintain stability and efficiency. Therefore, these are complex control problems that are well-suited for Reinforcement Learning.

These applications are vital for building a more sustainable future. Thus, RL contributes significantly to environmental objectives.

Navigating the Roadblocks: Challenges and Limitations in RL

Despite its remarkable successes and immense potential, Reinforcement Learning is not a panacea. The field faces several significant challenges that researchers are working to overcome. Understanding these limitations is crucial for deploying RL systems safely and expanding their capabilities.

The Sample Inefficiency Problem

One of the most significant challenges for RL methods is their appetite for data: they often need a huge number of interactions with their environment to learn effectively. This is a primary limitation of current Reinforcement Learning methods.

- High Cost and Time: In real-world applications, such as training a robot arm or a self-driving car, acquiring such extensive data can be time-consuming, costly, and at times, perilous. For example, imagine crashing a self-driving car thousands of times just to learn how to drive safely!

- Simulation Gaps: Simulations can provide abundant data. However, the “sim-to-real” gap often implies that an agent trained solely in a simulator might not perform as effectively in the real world. This is a common issue when creating Reinforcement Learning systems.

Researchers are exploring techniques such as experience replay and transfer learning so that agents can learn more from fewer samples.
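Experience replay, one of the techniques named above, can be sketched as a fixed-size buffer of past transitions. Training batches are drawn from it at random, so each interaction can be reused many times. The `ReplayBuffer` class, its capacity, and the tuple layout are illustrative assumptions. Practical systems add refinements such as prioritized sampling.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of past transitions; training batches are sampled uniformly at random."""

    def __init__(self, capacity, seed=None):
        self.buffer = deque(maxlen=capacity)  # the oldest transitions fall out when full
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Random sampling reuses old experience and breaks up correlations between consecutive steps.
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


buf = ReplayBuffer(capacity=3, seed=0)
for t in range(5):
    buf.add(state=t, action=0, reward=0.0, next_state=t + 1, done=False)

print(len(buf))  # 3: only the three most recent transitions are kept
batch = buf.sample(2)
print([transition[0] for transition in batch])
```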

The Reward Function Design Problem

Designing an effective reward function is both critical and surprisingly difficult. Because the reward signal is the agent’s only guide, a poorly designed one will lead the agent to learn undesirable behaviors. This makes reward design a core problem in Reinforcement Learning.

- Reward Hacking: This occurs when an agent exploits a loophole in the reward function to achieve a high score without actually fulfilling the intended objective. For instance, an agent meant to sort blocks but rewarded for “blocks moved” might simply push the blocks out of the area. It collects the maximum reward without sorting anything.

- Sparse Rewards: In many challenging tasks, salient rewards are infrequent. This makes it difficult for the agent to discern effective actions. Consider, for example, trying to learn a new language if you only got good feedback once a month!

Crafting a reward function that truly reflects the intended objective often requires extensive expert knowledge and careful, iterative refinement. Effective reward design is essential for successful Reinforcement Learning.
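Potential-based reward shaping is one well-known technique for easing sparse rewards. It is a swapped-in illustration here, not something the text above prescribes. The method adds gamma * phi(s') - phi(s) to the original reward, where phi is a "potential" that is higher near the goal. The goal position, the potential (negative distance to the goal), and `gamma = 0.99` are hypothetical. Shaping terms of exactly this form are known to leave the optimal policy unchanged, which guards against the kind of loophole described under reward hacking.

```python
GOAL = 9  # hypothetical goal position on a 1-D line


def sparse_reward(next_state):
    """Original task reward: 1 for reaching the goal, 0 for every other step."""
    return 1.0 if next_state == GOAL else 0.0


def potential(state):
    """Hypothetical potential: the closer to the goal, the higher (less negative) it is."""
    return -abs(GOAL - state)


def shaped_reward(state, next_state, gamma=0.99):
    """Potential-based shaping: original reward plus gamma * phi(s') - phi(s)."""
    return sparse_reward(next_state) + gamma * potential(next_state) - potential(state)


# Far from the goal, the sparse reward is silent, but the shaped reward signals direction.
print(sparse_reward(4), round(shaped_reward(3, 4), 2))  # step toward the goal
print(sparse_reward(2), round(shaped_reward(3, 2), 2))  # step away from the goal
```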

Generalization and Transferability Issues

RL models often struggle to generalize beyond the specific environment in which they were trained. An agent that masters a task in one setting may perform poorly if conditions shift even slightly, which is a significant hurdle for real-world Reinforcement Learning.

- Lack of Robustness: Variations in lighting, object appearance, or minor environmental shifts can significantly degrade an agent’s performance.

- Extensive Retraining: Deploying an RL agent on a new, slightly different task or environment therefore often requires substantial (and costly) retraining, which hinders widespread adoption.

Improving generalization is a major research focus, and methods such as domain randomization and meta-learning show promise for the future of Reinforcement Learning.

Computational Intensity

Training advanced RL agents, particularly Deep Reinforcement Learning models, demands substantial computational resources. Indeed, this is a significant bottleneck.

- Processing Power: These methods often require powerful GPUs and large clusters of machines to process vast amounts of data and perform the computations involved in neural network training.

- Time Commitment: Training can take days, weeks, or even months, depending on the complexity of the environment and the model.

This high computational cost can be prohibitive for smaller organizations or researchers with limited funding. Hence, this restricts the accessibility of Reinforcement Learning.

Interpretability: The Black Box Problem

Understanding why a complex RL method makes certain decisions can be exceedingly challenging. Deep neural networks, in fact, often function as “black boxes.” Therefore, this lack of clarity is a challenge for Reinforcement Learning.

- Debugging Challenges: If an RL agent behaves erratically or makes a critical error, pinpointing the exact cause can be difficult. This complicates debugging and improvement.

- Trust and Explainability: In critical applications like healthcare or autonomous driving, trust is paramount. Furthermore, without the ability to explain an agent’s reasoning, gaining public trust and regulatory approval becomes considerably more difficult.

Efforts are underway to develop DRL models that are more interpretable. Moreover, methods exist to visualize how agents make decisions.

Safety and Ethical Concerns

The inherent nature of trial-and-error learning can lead to risky or detrimental actions during training. This is especially true in real-world applications where errors have adverse consequences. Indeed, this concern is of paramount importance for Reinforcement Learning.

- Unsafe Exploration: An agent exploring for optimal policies might inadvertently cause harm, injury, or even fatalities. This could occur if deployed in a sensitive environment without adequate safety protocols.

- Bias and Fairness: Moreover, if the training environment or reward objective is biased, the RL agent can learn and perpetuate those biases. This leads to inequitable or erroneous outcomes.

Addressing safety and ethical concerns requires rigorous testing, human oversight, and the development of “constrained RL” methods that explicitly prevent unsafe actions. Ethical considerations are paramount when deploying Reinforcement Learning.
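A very simple version of the "prevent unsafe actions" idea is to filter the agent's choices through a safety rule before it acts. This approach is often called action masking or shielding. It is only one ingredient of constrained RL, which more generally optimizes reward subject to limits on cost or risk. In the sketch, the cliff at position 0, the `is_safe` rule, and the epsilon-greedy selection are hypothetical assumptions.

```python
import random

CLIFF = 0  # hypothetical: position 0 is a cliff edge the agent must never reach


def is_safe(state, action):
    """Hypothetical safety rule: reject any move that would land on or past the cliff."""
    return state + action > CLIFF


def safe_epsilon_greedy(q_values, state, actions, epsilon, rng):
    """Epsilon-greedy selection restricted to the actions that pass the safety rule."""
    allowed = [a for a in actions if is_safe(state, a)]
    if not allowed:
        raise RuntimeError("no safe action available; hand control to a fallback controller")
    if rng.random() < epsilon:
        return rng.choice(allowed)  # explore, but only among safe actions
    return max(allowed, key=lambda a: q_values.get((state, a), 0.0))  # exploit


rng = random.Random(0)
q_values = {(1, -1): 5.0, (1, 1): 0.0}  # the unsafe move happens to look attractive
choices = [safe_epsilon_greedy(q_values, 1, [-1, 1], epsilon=0.3, rng=rng) for _ in range(100)]
print(set(choices))  # {1}: the move onto the cliff is never taken
```

The filter protects the agent during exploration as well as exploitation. However, it is only as good as the hand-written rule, which is why it is combined with testing and human oversight rather than used alone.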

The Future of Adaptive Intelligence: Trends and Directions in Reinforcement Learning

Reinforcement Learning stands at the forefront of AI progress and is continuously evolving to address its current limitations and expand its capabilities. Market trends reflect this growth, with forecasts pointing to a future driven by intelligent, adaptive systems. Let’s explore the exciting trends shaping the trajectory of RL.

A Rapidly Expanding Market

The Reinforcement Learning market is experiencing rapid expansion. This indicates its increasing adoption across numerous industries. Indeed, while precise figures vary, the upward trend is undeniable.

Here’s an overview of the market’s robust growth:

| Metric | Projection 1 (Grand View Research) | Projection 2 (Mordor Intelligence) | Projection 3 (Future Market Insights) |
|---|---|---|---|
| Market Size (2024) | USD 52.71 Billion | USD 10.49 Billion | USD 6.13 Billion |
| Projected Size | USD 37.12 Trillion (by 2037) | USD 36.75 Billion (by 2029) | USD 98.85 Billion (by 2035) |
| Compound Annual Growth Rate (CAGR) | ~65.6% (2025-2037) | ~28.4% (2024-2029) | ~28.76% (2024-2035) |

Note: The USD 37.12 trillion projection is a clear outlier; the other sources project market sizes in the tens of billions.
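The compound-growth formula, future value = base * (1 + CAGR)^years, lets you check each projection against its own growth rate. The sketch takes the figures from the table and assumes each projection compounds from its 2024 value. The year counts (13, 5, and 11) follow from that assumption; the sources do not state them.

```python
def project(base, cagr, years):
    """Compound growth: value after `years` years at a constant annual growth rate `cagr`."""
    return base * (1 + cagr) ** years


# (2024 base in USD billions, CAGR, assumed years of compounding, reported projection in USD billions)
checks = {
    "Projection 1 (2024 to 2037)": (52.71, 0.656, 13, 37_120.0),
    "Projection 2 (2024 to 2029)": (10.49, 0.284, 5, 36.75),
    "Projection 3 (2024 to 2035)": (6.13, 0.2876, 11, 98.85),
}

for name, (base, cagr, years, reported) in checks.items():
    computed = project(base, cagr, years)
    gap = (computed - reported) / reported
    print(f"{name}: computed USD {computed:,.2f}B, reported USD {reported:,.2f}B ({gap:+.1%})")
```

All three projections agree with their stated growth rates to within 1%. In particular, USD 37.12 trillion is what a 65.6% CAGR produces over 13 years from USD 52.71 billion, so that figure is arithmetically consistent. What sets it apart from the others is its much higher growth rate and starting size.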

Whatever the precise figures, the sources agree that RL is a rapidly expanding field. North America currently accounts for the largest revenue share, driven by significant research investment and a strong automotive industry. Key industries such as Banking, Financial Services, and Insurance (BFSI) and IT & telecom use it extensively. Growth is fueled by rising demand for factory automation and more personalized digital services. This underscores the broad applicability of Reinforcement Learning.

Key Future Trends and Research Directions in Reinforcement Learning

The future of Reinforcement Learning aims to render agents more effective, robust, and versatile. It also seeks to enhance their safety for real-world deployment.

1. Enhanced Sample Efficiency and Generalization

- The Goal: To reduce the data and interactions an agent requires for learning. This makes it practical for scenarios where data acquisition is costly or slow.

- Methods: Researchers are investigating approaches such as experience replay, model-based RL, and transfer learning. For example, experience replay reuses past experiences. Model-based RL learns the dynamics of an environment to generate more data. Similarly, transfer learning applies knowledge from one task to a novel, similar one. Ultimately, this will enable faster learning and broader adoption for Reinforcement Learning agents.

2. Safe and Robust Real-World Deployment

- The Goal: To move RL beyond simulations and into critical real-world systems, such as self-driving cars and energy grids, with strong assurances of safety.

- Methods: These include constrained RL, where agents are explicitly prevented from taking unsafe actions, and safe exploration methods that limit risky trial and error during training. Both are pivotal for the responsible deployment of Reinforcement Learning.

Advancing RL through Integration and Evolution

3. Integration with Other AI Branches

- The Goal: To forge more robust and adaptable AI systems. This is achieved by integrating RL’s adaptive learning capabilities with strengths from other AI fields.

- Examples: Combining symbolic reasoning with RL can make agents more interpretable. Integrating RL with large language models helps agents understand natural-language instructions. Multi-agent RL focuses on enabling multiple agents to collaborate on complex tasks that require teamwork. This interdisciplinary approach amplifies the power of Reinforcement Learning.

4. Continual Advancements in Deep Reinforcement Learning (DRL)

- The Goal: To build even more capable and autonomous systems through continued innovation in DRL architectures and training methods.

- Innovations: Expect more adaptive network designs, better methods for handling long-term dependencies, and techniques that learn more effectively from sparse or delayed rewards. These improvements further strengthen the core capabilities of Reinforcement Learning.

Future Directions in Reinforcement Learning

5. Human-in-the-Loop Learning

- The Goal: To leverage human wisdom and expertise to guide and accelerate RL agents’ learning.

- Approach: Incorporating human feedback, demonstrations, and supervision can significantly improve an agent’s performance. This is especially true for tasks where explicit rewards are hard to design or where ethical considerations are paramount. The result is a powerful synergy between human intelligence and machine learning.

6. Scalable World Models and Multimodal Reinforcement Learning

- The Goal: To develop agents that build more comprehensive, refined understandings of their surroundings from diverse types of data.

- Approaches: This involves building “world models” that predict how an environment will respond to actions. It also involves developing multimodal RL agents that process and learn from several data modalities at once, such as visual, auditory, and haptic inputs. These are exciting new avenues in Reinforcement Learning.

Ultimately, the field is moving towards making Reinforcement Learning more useful, trustworthy, and flexible. The vision is to help AI systems solve problems that require flexible, long-term decision-making in constantly changing, real-world settings.

Embracing the AI Revolution: Your Role in the Reinforcement Learning Journey

You’ve now journeyed through the world of Reinforcement Learning, exploring its fundamental concepts, its transformative applications, and its future potential. We’ve seen how RL agents learn through trial and error, balancing exploration of new actions with exploitation of existing knowledge to master complex tasks. We’ve also examined its challenges, from heavy data demands to the intricate task of reward design.

Reinforcement Learning is more than just another method; it represents a profound shift in how we think about intelligence. It is a powerful step toward truly adaptive and autonomous systems that can learn, evolve, and make optimal decisions amid uncertainty. Whether it is assisting robots in surgery, improving energy grids for a sustainable future, or personalizing your digital experiences, RL is already here, and its impact is only set to grow.

As this field continues to evolve, its influence will permeate every aspect of our lives. Emerging innovations promise to unlock even greater potential for Reinforcement Learning. Specifically, these encompass methods requiring less data, safer real-world applications, and closer collaboration with human intelligence. Indeed, this is a journey of constant discovery. Each problem solved paves the way for new breakthroughs.

So, as you reflect on the remarkable capabilities and continuous evolution of Reinforcement Learning, what exciting new applications or ethical questions do you envision shaping its future?