Imagine a world where machines don’t just process data, but truly understand what they’re looking at. They grasp what objects are, where they’re found, and how they interact with their environment. This isn’t science fiction; it’s the powerful reality of computer vision. At its heart lies a core ability: object detection. This incredible technology helps computers interpret visual information, much like our own brains do. It’s changing how we live, work, and interact with the digital world.

What is Object Detection? A Machine’s Eye for Detail

Computer vision, a vital field within artificial intelligence, enables machines to “see” and interpret visual information. Object detection is one of its key tasks. It is not enough for a machine to recognize that an image contains a cat; it must also determine where in the image that cat is. Think of it as teaching a computer to play “I Spy” with great accuracy and speed.

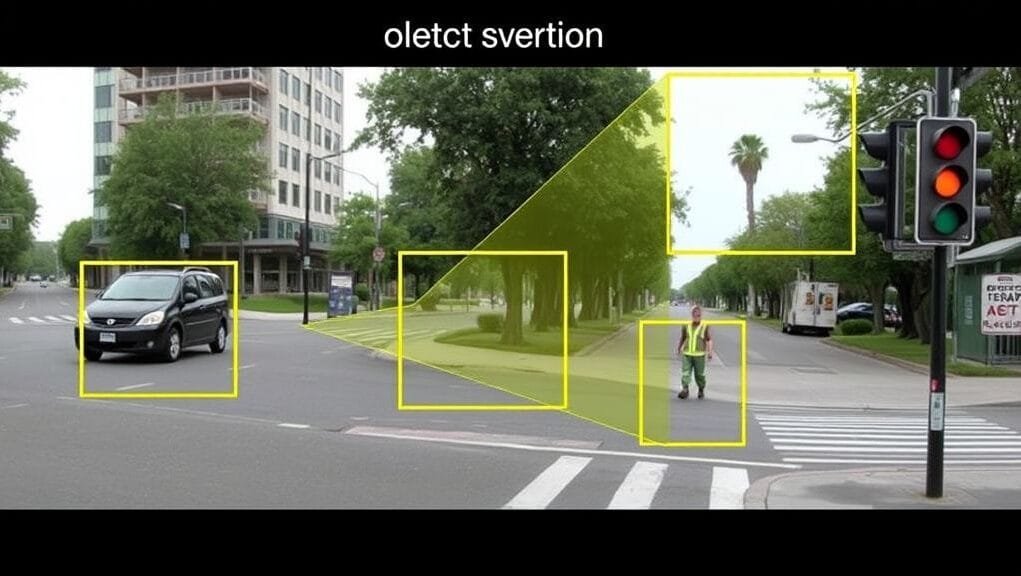

Object detection involves two vital parts working together. First, there’s object classification, which is about identifying what an object is—for example, is it a car, a pedestrian, or a traffic light? Second, and equally important, is object localization. This part finds the object’s precise spot within an image or video frame. Often, a tight “bounding box” is drawn around the identified object to show this. Together, these two steps enable machines to gain a full understanding of visual scenes.

The Evolution: From Manual Features to Deep Learning’s Revolution

The journey of object detection is a story of remarkable transformations, driven by significant breakthroughs in machine learning. For many years, researchers relied on methods that required extensive manual feature engineering and clever design. The arrival of [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) completely transformed the field, delivering levels of accuracy that hand-engineered methods had not reached.

Traditional Approaches: The Dawn of Detection

Before [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) became popular, object detection methods were quite different. Early techniques relied on hand-designed “features” that a computer could identify. You can think of it like a detective searching for exact clues. For example, the Viola-Jones framework, notable for its role in real-time face detection, used simple rectangular (Haar-like) features computed from pixel intensities to find faces quickly. Another method, Histogram of Oriented Gradients (HOG), described object shapes by counting how often edges of each orientation occur in local regions of an image.

These traditional approaches were good for their era, but they had drawbacks. Human experts had to manually design the features that the algorithms would use, which made the methods inflexible. If you wanted to detect a new type of object, you often had to start over and design new features. Classifiers like Support Vector Machines (SVMs) would then use these features to decide whether an object was present. These methods were important groundwork, and they paved the way for something much stronger.

The Deep Learning Revolution: CNNs Take Center Stage

The profound shift came with [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/), particularly with the emergence of [Convolutional Neural Networks (CNNs)](https://www.ibm.com/topics/convolutional-neural-networks). Imagine a system that doesn’t need you to tell it what features to look for. Instead, it learns them on its own, directly from vast amounts of data. This ability is the fundamental strength of CNNs. They automatically learn a hierarchy of features: early layers respond to simple edges and textures, while deeper layers respond to more detailed parts of an object, like eyes or wheels.

This shift meant that models extracted features on their own, so engineers no longer had to spend countless hours crafting them by hand. A significant improvement in both accuracy and efficiency followed. Given enough data, [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) models can spot subtle patterns and connections that human-designed features might miss. As a result, CNNs outperformed earlier approaches and became the cornerstone of modern object detection.
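To make the idea of an edge-detecting feature a little more concrete, here is a minimal sketch in plain Python of what a single convolutional filter computes. The kernel is a hand-picked Sobel filter that responds to vertical edges; in a real CNN the kernel values would be learned from data rather than written by hand. The helper name `conv2d` and the tiny test image are assumptions made for illustration.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and sum the element-wise products.

    This is the cross-correlation that deep learning libraries call "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# A 5x6 grayscale image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Hand-picked vertical-edge kernel (Sobel). A CNN would learn such values.
sobel_x = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

edges = conv2d(image, sobel_x)
for row in edges:
    print(row)  # strong responses only where dark meets bright
```

The output is zero in the flat regions and large along the boundary, which is the kind of low-level signal that deeper layers combine into more complex features.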

An illustration showing the basic layers of a Convolutional Neural Network (CNN) processing an image to extract features.

How Object Detection Works: The Core Principles

At its heart, object detection combines two ideas: recognizing what objects are and pinpointing where they are within a visual scene. Together, these give a machine a structured description of a scene rather than a single label.

Beyond Simple Classification: Localization and Bounding Boxes

Many people know about image classification. Here, an AI finds the primary subject of an entire image, labeling it, for example, as “dog.” Object detection, however, does much more. It doesn’t just say “there’s a dog somewhere in this picture.” Instead, it shows exactly where the dog is. This is what object localization does. It’s like drawing a precise boundary around each item of interest in the image.

A bounding box usually shows this localization. Imagine a rectangle drawn tightly around a car, a pedestrian, or a traffic sign. Bounding boxes provide the spatial information that sets object detection apart from simpler classification tasks: they tell you not only what is present, but also where each specific object is. Object detection models learn to associate visual features like size, shape, and color with specific objects based on patterns found in labeled training data. In other words, they infer these characteristics rather than being explicitly told what to look for.
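As a rough illustration of what a detector hands back, the sketch below models one detection as a label, a confidence score, and a box. The class names `BoundingBox` and `Detection`, the corner-based `(x_min, y_min, x_max, y_max)` pixel format, and the example values are assumptions; real libraries use a variety of box formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundingBox:
    # Corner format in pixels; (x_min, y_min) is the top-left corner.
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    @property
    def area(self) -> float:
        return (self.x_max - self.x_min) * (self.y_max - self.y_min)

    @property
    def center(self) -> tuple:
        return ((self.x_min + self.x_max) / 2, (self.y_min + self.y_max) / 2)


@dataclass(frozen=True)
class Detection:
    label: str        # what the object is (classification)
    score: float      # model confidence between 0 and 1
    box: BoundingBox  # where the object is (localization)


# Hypothetical output for one image.
detections = [
    Detection("dog", 0.92, BoundingBox(40, 60, 200, 220)),
    Detection("ball", 0.75, BoundingBox(210, 180, 250, 220)),
]

# Keep only detections the model is reasonably sure about.
confident = [d for d in detections if d.score >= 0.8]
print([d.label for d in confident])
```

Each entry answers both questions at once: the label says what, and the box says where.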

Learning from Data: Features, Patterns, and Annotations

The power of modern object detection models comes from their learning ability. They don’t arrive pre-loaded with knowledge of every possible object. Instead, they are trained on extensive datasets of images and videos. Crucially, humans meticulously annotate these datasets: people review each image, draw a bounding box around every item of interest, and label what each object is. To teach a model to detect bicycles, for example, you’d show it thousands of pictures of bicycles, each with a box around it labeled “bicycle.”

During training, the [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) model, usually a CNN, looks at these labeled images. It learns to find features—unique visual traits that help identify different objects. It builds an understanding of patterns, like the common shape of a car, the texture of a person’s clothing, or the color of a traffic light. Furthermore, the model then learns to associate these features with specific object types. It also learns to predict the precise location for their bounding boxes. Ultimately, this data-driven approach is what makes these models so flexible and robust. The more varied and accurate the training data, the better the model performs.

Dominant Deep Learning Algorithms: Two-Stage vs. One-Stage

The [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) field for object detection is dominated by two main types of algorithms: two-stage detectors and one-stage detectors. Each approach offers distinct advantages, especially when considering the trade-off between accuracy and speed. Understanding these differences is key to appreciating the versatility of modern object detection systems.

Two-Stage Detectors: Precision Through Refinement (R-CNN Family)

Imagine a detective who first finds possible areas of interest, then looks closely at each one. This describes the two-stage detection approach. These models aim for high accuracy by performing object detection sequentially. In the first stage, they propose potential regions in an image where an object might be present. These are called “region proposals.” In the second stage, these proposed regions are meticulously analyzed, classified, and refined. This helps to predict precise bounding boxes.

The R-CNN series is the most prominent family of two-stage detectors. It started with R-CNN (Region-based Convolutional Neural Network), which was innovative but slow. It later evolved into Fast R-CNN, which shares computation across regions, and then Faster R-CNN, which generates the region proposals with a neural network as well. Both changes significantly improved processing speed. Faster R-CNN remains a well-regarded and robust framework today, known for strong accuracy on standard benchmarks. Its combination of rapid region proposals and rigorous classification leads to highly accurate object detection. Another important member, Mask R-CNN, extends this capability: it performs not just object detection but also instance segmentation, outlining each object down to the pixel. Two-stage detectors are generally slower than one-stage detectors, but their higher accuracy makes them well suited to tasks demanding high precision.

One-Stage Detectors: Speed for Real-Time Performance (YOLO, SSD)

Now, imagine a very fast observer who can detect and localize objects in a single glance. This is the core principle behind one-stage detectors. These algorithms are designed for speed. They do both object classification and bounding box prediction in a single network pass. This simple approach makes them very effective, especially for applications requiring instantaneous processing, such as video analysis.

In this group are algorithms like You Only Look Once (YOLO) and Single Shot MultiBox Detector (SSD). When YOLO was first introduced in 2016, it was revolutionary for its speed, processing images much faster than its two-stage counterparts. Since then, YOLO has seen continuous development through new versions (YOLOv4, YOLOv5, YOLOv7, YOLOv8, YOLOv9), each aiming for better performance and efficiency than its predecessors. These advancements have secured YOLO’s spot as a top choice for real-time applications. The speed of one-stage models sometimes comes with a slight trade-off in accuracy, especially when compared to the most accurate two-stage models, but their accuracy is sufficient for many real-world uses.
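To give a feel for “a single network pass,” here is a simplified sketch of how the raw output of a grid-based one-stage detector might be turned into boxes. It is loosely in the spirit of early YOLO, but the per-cell tuple layout, the function name `decode_grid`, and the numbers are assumptions for illustration, not the output format of any particular YOLO or SSD version.

```python
def decode_grid(predictions, grid_size, image_size, class_names, threshold=0.5):
    """Turn per-cell predictions into (label, score, box) tuples.

    Assumed layout: predictions[row][col] =
        (objectness, cx, cy, w, h, class_scores)
    where cx, cy are offsets inside the cell (0..1) and
    w, h are fractions of the full image size (0..1).
    """
    cell = image_size / grid_size
    detections = []
    for row in range(grid_size):
        for col in range(grid_size):
            objectness, cx, cy, w, h, class_scores = predictions[row][col]
            best = max(range(len(class_scores)), key=class_scores.__getitem__)
            score = objectness * class_scores[best]
            if score < threshold:
                continue  # this cell does not contain a confident detection
            center_x = (col + cx) * cell
            center_y = (row + cy) * cell
            box_w, box_h = w * image_size, h * image_size
            box = (center_x - box_w / 2, center_y - box_h / 2,
                   center_x + box_w / 2, center_y + box_h / 2)
            detections.append((class_names[best], score, box))
    return detections


# Hypothetical 2x2 grid over a 100x100 image; only one cell fires.
empty = (0.0, 0.5, 0.5, 0.1, 0.1, [0.5, 0.5])
hit = (0.9, 0.5, 0.5, 0.4, 0.2, [0.1, 0.9])
predictions = [[empty, empty],
               [empty, hit]]

results = decode_grid(predictions, grid_size=2, image_size=100,
                      class_names=["person", "car"])
print(results)
```

Every cell produces its class scores and box in the same pass, so there is no separate proposal stage to wait for. Production detectors add further steps, such as removing duplicate boxes, that are omitted here.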

Measuring Success: Benchmarking Object Detection Models

How do we compare the performance of these different models? Fair comparison requires standardized evaluation, and this is where benchmark datasets become important. These are large, well-labeled sets of images and videos that serve as shared test beds for object detection algorithms. By testing models on the same data, researchers can fairly compare their accuracy, speed, and overall performance.

Some of the most well-known benchmark datasets include Microsoft COCO (Common Objects in Context) and ImageNet. Microsoft COCO is highly regarded for object detection because it features a wide variety of objects in complex scenes, often with multiple objects, occluded objects, and varying sizes. ImageNet is primarily known for image classification, but it also includes an object detection task with bounding-box annotations. These benchmarks allow the community to track progress, identify top-performing models, and ensure that advancements are rigorously evaluated against realistic problems.
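One building block behind most detection metrics, including those used with COCO, is intersection over union (IoU): the area where a predicted box and a ground-truth box overlap, divided by the area they cover together. A minimal sketch, assuming boxes are `(x_min, y_min, x_max, y_max)` tuples and using a hypothetical helper name `iou`:

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - intersection
    return intersection / union if union > 0 else 0.0


ground_truth = (0, 0, 10, 10)
prediction = (5, 5, 15, 15)
print(round(iou(ground_truth, prediction), 3))  # 25 / 175
```

An IoU of 1.0 means a perfect match and 0.0 means no overlap. Benchmarks typically count a prediction as correct only if its IoU with a ground-truth box exceeds a chosen threshold.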

Real-World Impact: Diverse Applications of Object Detection

Object detection is no longer just a research topic. Instead, it’s a core technology driving innovation in nearly every industry. Its ability to let machines “see” and understand their surroundings has unlocked possibilities for applications that were once only in sci-fi stories. Thus, you’ll find object detection seamlessly integrated into many systems, making our lives safer, more efficient, and more connected.

Safer Roads: Autonomous Vehicles

Perhaps one of the most critical uses of object detection is in autonomous vehicles. For a self-driving car to drive safely, it must accurately perceive its environment in real time. This means correctly spotting people walking across the street, identifying other cars on the road, understanding traffic lights, recognizing crosswalks, and detecting unexpected obstacles like debris. Object detection provides vital visual information, helping vehicles make informed decisions, prevent crashes, and keep passengers and others on the road safe. Indeed, true autonomous driving would be impossible without accurate object detection.

Enhanced Security: Surveillance and Monitoring

In the area of security and surveillance, object detection offers a significant enhancement. It moves beyond simple recording to intelligent, proactive monitoring. This technology allows real-time tracking of people and objects within video footage. Such tracking can be very useful for security staff. Moreover, it can be trained to detect anomalous behaviors, such as someone loitering in restricted areas. It can also identify items linked to crime, like unattended luggage or even weapons. By automating the identification of anomalies, security systems can become significantly more proactive and efficient, alerting humans only when truly needed.

Life-Saving Insights: Medical Imaging

The healthcare industry benefits greatly from object detection, especially in medical imaging. Radiologists can use these AI tools to help with the demanding task of examining scans in detail. Object detection models can be trained to assist in identifying subtle tumors in X-rays, locating broken bones, or highlighting other abnormalities in MRI and CT scans. These systems don’t replace human expertise; they act as intelligent assistants, pointing out spots that might otherwise be missed. This can improve the accuracy and speed of diagnosis, ultimately leading to better patient care and outcomes.

Smarter Commerce: Retail Innovations

The retail industry is using object detection to create more efficient, customer-centric environments. For example, it’s used for stock control, automatically detecting when shelves require restocking or tracking product movement. People counting and customer analysis offer insights into customer traffic flow, popular store areas, and queue lengths, which helps optimize store layouts and staffing levels. Object detection also plays a role in loss prevention by flagging suspicious activity at self-checkout stations or on the shop floor. These applications help retailers streamline operations, enhance the shopping experience, and increase profits.

Optimized Production: Manufacturing Quality Control

In manufacturing, accuracy and speed are key. Object detection is invaluable for quality control. It automatically checks items on assembly lines to identify defects such as scratches, misalignments, or missing parts. This, in turn, reduces the need for manual inspection, which can be slow and prone to human error. By guiding robots, object detection also helps automate factory work, ensuring that tasks like picking and placing parts are done with high precision. As a result, this leads to better product quality, reduced waste, and increased throughput in factories.

Game-Changing Data: Sports Analytics

Sports analytics has been profoundly transformed by object detection. During games, AI systems can track objects like the ball in football, basketball, or tennis, providing precise data on its path and speed. They can also follow player movements, identifying patterns, team formations, and individual player statistics. This detailed data helps coaches make more strategic game decisions and helps commentators offer clearer explanations. It also lets fans enjoy sports in a new way, with a better view of how the game actually unfolds.

Immersive Experiences: AR/VR Integration

Augmented Reality (AR) and Virtual Reality (VR) rely heavily on accurate object detection and tracking. To create truly immersive AR/VR experiences, the system must understand its physical environment. Object detection allows AR apps to accurately overlay virtual objects onto real surfaces, like placing a virtual sofa in your living room. In VR, it helps track controllers and physical boundaries, stopping users from bumping into real objects. Overall, this technology is key to blending digital content with our physical environment so that virtual elements feel like part of the scene.

Navigating the Hurdles: Key Challenges in Object Detection

While object detection has made remarkable advancements, it’s far from a perfected science. The real world is messy, unpredictable, and full of visual difficulties that continue to trip up even the best AI models. Researchers and engineers are continuously working to address these issues, extending the limits of what these systems can achieve.

The Classification-Localization Conundrum

One of the primary challenges in object detection is its two-fold goal of classifying and locating. It’s not just about knowing what an object is, but also about determining its precise location at the same time. This requires the model to perform two separate but linked tasks in tandem. If the localization is slightly inaccurate, the classification can also be compromised, because the bounding box might include irrelevant background or omit crucial object features. Achieving high accuracy in both aspects remains a significant challenge. It is often addressed with multi-task loss functions during training, which combine a classification error and a localization error into a single objective and weight the two so that the model learns to get both the object’s type and its location right.
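As a sketch of what such a multi-task loss can look like for a single object, the snippet below adds a cross-entropy term for the class to a smooth L1 term for the box. That pairing is one common choice, not the only one, and the function names, the `box_weight` parameter, and the example numbers are assumptions made for illustration.

```python
import math


def cross_entropy(class_probs, true_class):
    """Classification term: penalize low probability on the correct class."""
    return -math.log(max(class_probs[true_class], 1e-12))


def smooth_l1(pred_box, true_box, beta=1.0):
    """Localization term: quadratic for small errors, linear for large ones."""
    total = 0.0
    for p, t in zip(pred_box, true_box):
        diff = abs(p - t)
        if diff < beta:
            total += 0.5 * diff * diff / beta
        else:
            total += diff - 0.5 * beta
    return total


def detection_loss(class_probs, true_class, pred_box, true_box, box_weight=1.0):
    """Weighted sum of the two terms; box_weight sets their relative importance."""
    return (cross_entropy(class_probs, true_class)
            + box_weight * smooth_l1(pred_box, true_box))


# Hypothetical prediction: unsure about the class, box slightly off.
loss = detection_loss(
    class_probs=[0.5, 0.5], true_class=0,
    pred_box=(0.0, 0.0, 0.0, 0.0), true_box=(0.5, 0.0, 0.0, 2.0),
    box_weight=2.0,
)
print(round(loss, 4))
```

Raising `box_weight` pushes training to care more about tight boxes, while lowering it favors getting the label right.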

Balancing Speed and Accuracy for Real-Time Needs

For many real-world uses, especially those with video, real-time performance is a must-have. Think of self-driving cars that need to react in milliseconds. However, there’s often a significant trade-off between speed and accuracy. Highly accurate models tend to demand substantial computational resources and are therefore slower, while faster models sometimes sacrifice some accuracy. One-stage detectors like YOLO focus on speed, making them well suited to real-time applications, but they might not always reach the peak accuracy of slower, two-stage systems. The constant challenge is to create new designs and optimization techniques that close this gap, delivering both high speed and robust accuracy.

Handling Scale, Shape, and Perspective Variations

Objects in the real world don’t always appear perfectly arranged. They can be very small or very large, appearing at different distances and from various perspectives. This range of spatial scales and aspect ratios presents a significant challenge. For example, a small object, like a distant pedestrian, might occupy only a few pixels, making it hard to detect. Likewise, an object viewed head-on will look different from one seen from the side or at an angle. Objects can also deform: a person’s posture changes, or a car may be dented. Models handle these variations with techniques such as multiple feature maps and anchor boxes. Multiple feature maps allow different layers of the neural network to detect objects at different scales, while anchor boxes are predefined box shapes and sizes that the model uses as starting points and adjusts to fit each object.
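To illustrate anchor boxes, the sketch below generates a small set of predefined boxes around one image location by combining a few scales with a few aspect ratios. The function name `make_anchors`, the choice to keep the area fixed per scale, and the specific scales and ratios are assumptions; detectors differ in how they define their anchors.

```python
import math


def make_anchors(center_x, center_y, scales, aspect_ratios):
    """Return (x_min, y_min, x_max, y_max) anchors centered on one point.

    Each anchor has area scale**2; aspect ratio is width / height.
    """
    anchors = []
    for scale in scales:
        for ratio in aspect_ratios:
            width = scale * math.sqrt(ratio)
            height = scale / math.sqrt(ratio)
            anchors.append((center_x - width / 2, center_y - height / 2,
                            center_x + width / 2, center_y + height / 2))
    return anchors


# Two sizes, three shapes: tall, square, wide.
anchors = make_anchors(100, 100, scales=[32, 64], aspect_ratios=[0.5, 1.0, 2.0])
for box in anchors:
    print(tuple(round(v, 1) for v in box))
```

A tall anchor is a natural starting point for a pedestrian and a wide one for a car seen from the side; the network then only has to predict small corrections to the closest anchor.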

The Data Dilemma: Scarcity and Imbalance

Lack of data is a pervasive issue in [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/). Detection models require large, high-quality, and well-labeled datasets for training, and creating these datasets is a labor-intensive and expensive process, often involving numerous human annotators. Even when data is plentiful, there’s often an issue of class imbalance, which occurs when some object types are much more common than others. For example, in a traffic scene dataset, there might be thousands of cars but only a few bicycles or certain rare road signs. This unevenness can lead to biased models that perform very well on common objects but poorly on rare ones, simply because they have seen too few examples of the rarer categories.
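One common way to soften class imbalance, offered here only as an example remedy, is to weight each class inversely to how often it appears so that mistakes on rare classes count for more during training. The sketch below computes such weights; the function name and the label counts are made up for illustration.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by total / (num_classes * class_count).

    A perfectly balanced dataset gives every class a weight of 1.0.
    """
    counts = Counter(labels)
    total = len(labels)
    num_classes = len(counts)
    return {cls: total / (num_classes * count) for cls, count in counts.items()}


# Hypothetical traffic dataset: many cars, few bicycles, very few rare signs.
labels = ["car"] * 900 + ["bicycle"] * 90 + ["rare_sign"] * 10
weights = inverse_frequency_weights(labels)
for cls, weight in weights.items():
    print(f"{cls}: {weight:.2f}")
```

These weights would typically be multiplied into the classification loss. Other options, such as oversampling rare classes or collecting more examples of them, address the same problem from the data side.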

Overcoming Obstructions: Occlusion and Poor Visibility

The real world is seldom perfectly clear. Occlusion, where objects are partly or fully blocked by other objects, is a major challenge for detectors. If a person is largely hidden behind a parked car, for example, the model might find it hard to spot them. Poor visibility also significantly hinders detection. It can be caused by adverse weather like fog, heavy rain, or snow, or by difficult lighting such as deep shadows or dim light. These factors change pixel values and obscure crucial features, making it difficult for the model to “see” and accurately perceive objects. Developing models that are robust against these real-world imperfections is an active area of research.

Navigating Complex Environments: Viewpoint, Deformation, and Clutter

Beyond simple occlusions, objects face numerous complex environmental factors. Viewpoint variation refers to an object being viewed from diverse angles, which can significantly alter its appearance. For example, a chair looks very different from the front compared to its side or from above. Deformation is another hurdle, especially for flexible objects like humans or animals, as their body shapes can change considerably depending on their actions. Lastly, cluttered backgrounds pose a significant obstacle. When an object blends into a busy or textured background, distinguishing it from its surroundings becomes much harder. Imagine trying to find one particular item in a crowded hidden-object picture; this is the kind of challenge detectors face in highly complex scenes.

Gazing into the Future: Emerging Trends in Object Detection

The field of computer vision, and specifically object detection, is constantly evolving, with researchers and engineers pushing the boundaries of what’s possible. The future promises even more sophisticated, efficient, and equitable object detection systems that will further transform our world.

Advancing Neural Architectures

The search for better results never ends. One of the most important trends is the ongoing development of advanced [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) architectures. This involves designing novel types of neural networks that are more efficient, more accurate, or optimized for specific tasks. Researchers are exploring new ways to connect layers, process information, and learn features, with the aim of delivering better performance with fewer computational resources. These architectural innovations are vital for unlocking the next generation of object detection abilities.

Processing Power on the Edge

In the past, demanding AI tasks often relied on powerful cloud computing. However, a significant trend is the move to edge computing. This simply means processing data directly on the device itself, instead of sending it to remote servers. For object detection, this is a game-changer. Imagine an autonomous drone performing real-time surveillance without needing a constant internet connection, or a smart factory sensor rapidly identifying defects. Edge computing allows real-time object detection in places where network connectivity is unreliable or where the delay of a round trip to a server is unacceptable, which is key for uses like self-driving cars, factory automation, and portable AR/VR devices.

Smarter Data Utilization: Self-Supervised and Few-Shot Learning

As discussed above, labeled data is expensive to produce. Emerging methods like self-supervised learning and few-shot learning address this directly. Self-supervised learning allows models to learn from unlabeled data by solving “pretext tasks” (e.g., guessing missing parts of an image), so the data itself supplies the learning signal. Few-shot learning tries to teach models to recognize novel objects from just a few examples, which greatly lowers the need for large labeled datasets. These approaches are vital for making model training more scalable and efficient, especially for detecting new or rare objects where extensive labeled data is unavailable.
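The “guessing missing parts of an image” idea can be sketched in a few lines: hide a patch of an unlabeled image and keep the hidden pixels as the target a model would be asked to reconstruct. No human annotation is involved. The function name, the zero fill value, and the tiny 4x4 image are assumptions for illustration, and the model that would do the guessing is left out.

```python
import random


def make_masked_example(image, patch_size, rng):
    """Hide one square patch; return (masked_image, target_patch, (top, left))."""
    height, width = len(image), len(image[0])
    top = rng.randrange(height - patch_size + 1)
    left = rng.randrange(width - patch_size + 1)

    # The hidden pixels become the training target.
    target = [row[left:left + patch_size] for row in image[top:top + patch_size]]

    # Copy the image and blank out the patch (0 is an arbitrary fill value).
    masked = [list(row) for row in image]
    for r in range(top, top + patch_size):
        for c in range(left, left + patch_size):
            masked[r][c] = 0
    return masked, target, (top, left)


# A tiny unlabeled "image" with pixel values 1..16.
image = [[r * 4 + c + 1 for c in range(4)] for r in range(4)]
masked, target, (top, left) = make_masked_example(image, 2, random.Random(0))

print("input to the model:", masked)
print("what it should predict:", target)
```

Every unlabeled image can yield many such input and target pairs, which is why this style of training scales without annotators.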

Beyond 2D: The Rise of 3D Object Detection

Our world is 3D, and for many applications, understanding depth and how things relate in space is vital. That’s why 3D object detection is becoming increasingly important. Instead of just drawing 2D bounding boxes on an image, 3D object detection estimates each object’s position, orientation, and dimensions in 3D space. This is key for uses like self-driving, robotics, and advanced AR/VR. For example, it helps self-driving cars understand the distance and direction of other vehicles, enables robots to grab and move objects, and allows for highly accurate spatial mapping in AR/VR. Overall, it provides a much deeper understanding of complex scenes than traditional 2D methods.
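A 3D detection can be pictured as a box with a position, dimensions, and an orientation. The sketch below uses one plausible parameterization (center, size, and a yaw angle about the vertical axis); the class name `Box3D`, the field names, and the coordinate convention are assumptions, since datasets and libraries define these differently.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Box3D:
    # Center of the box in meters, relative to the sensor.
    x: float
    y: float
    z: float
    # Dimensions in meters.
    length: float
    width: float
    height: float
    # Rotation about the vertical axis, in radians.
    yaw: float

    def distance(self) -> float:
        """Straight-line distance from the sensor to the box center."""
        return math.sqrt(self.x ** 2 + self.y ** 2 + self.z ** 2)

    def footprint(self):
        """Four ground-plane corners of the box (a bird's-eye view)."""
        cos_y, sin_y = math.cos(self.yaw), math.sin(self.yaw)
        corners = []
        for sx, sy in ((1, 1), (1, -1), (-1, -1), (-1, 1)):
            lx, ly = sx * self.length / 2, sy * self.width / 2
            corners.append((self.x + lx * cos_y - ly * sin_y,
                            self.y + lx * sin_y + ly * cos_y))
        return corners


# Hypothetical car detected ahead and to the side of the sensor.
car = Box3D(x=3.0, y=4.0, z=0.0, length=4.0, width=2.0, height=1.5, yaw=0.0)
print(car.distance())
print(car.footprint())
```

Compared with a 2D box, this representation directly answers questions such as how far away the object is and which way it is facing.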

Holistic Understanding: Multi-modal Detection

The human brain doesn’t just use sight; it integrates information from multiple senses. Similarly, multi-modal detection aims to improve accuracy and scene understanding by fusing diverse data types. This could involve blending visual information from cameras with data from LiDAR (light detection and ranging) sensors, radar, or even text. By integrating several data streams, models can gain a richer and more comprehensive understanding of objects and their environment. This is especially important in challenging or ambiguous conditions, where one type of sensor data alone wouldn’t be sufficient.

Detecting the Tiniest Details: Small Object Detection

One of the persistent challenges, as noted, is detecting small objects. These objects often take up only a few pixels and contain limited visual information, which makes them hard for models to spot. New techniques are being actively developed to improve small object detection, including feature pyramid networks, context aggregation methods, and specialized training strategies. These methods help models pick up subtle visual cues and avoid missing small details in large images. Progress in this area is key for uses like drone surveillance or detecting distant hazards.

Leveraging Generative AI for Data Augmentation

Lack of data is a recurrent challenge in [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/). Enter generative AI. Models like Generative Adversarial Networks (GANs) are increasingly used to generate synthetic data. This means that AI can create realistic images, along with bounding boxes, for objects that are rare or difficult to capture in real-world scenarios. This is particularly beneficial in sensitive areas like healthcare, where patient data is scarce, and for simulating rare but important situations in self-driving. By augmenting real datasets with AI-generated data, researchers can improve model robustness and generalization without the often prohibitive cost of collecting more real-world data.

Building Responsible AI: Ethics and Bias Mitigation

As object detection becomes more pervasive, so too does the need for responsible development. Research in ethical AI and bias mitigation is crucial. Object detection models, like any AI, can inherit biases from their training data. For example, if a model is primarily trained on data reflecting a specific demographic, it might perform poorly or exhibit bias against others. Research in this area focuses on identifying and correcting these biases so that object detection technologies are deployed equitably, fairly, and conscientiously across all populations and applications. The goal is for these powerful tools to benefit everyone without accidentally causing harm.

The Vision Ahead: A Future Shaped by Smart Machines

Object detection stands as a cornerstone of modern computer vision, constantly evolving and expanding its capabilities. From its humble beginnings with handcrafted features to the sophisticated [deep learning](https://www.nvidia.com/en-us/glossary/data-science/deep-learning/) architectures of today, the field has progressed at an astonishing pace. Significant challenges persist, including handling occluded objects and balancing speed with accuracy. Nevertheless, ongoing research continues to push the boundaries of what machines can “see” and understand.

The effect of this technology is profound. It affects everything from the safety of our roads to the efficiency of our factories and the quality of our healthcare. As we develop advanced architectures, utilize edge computing, and address ethical considerations, object detection will become more robust, intelligent, and integrated into our daily lives.

What do you believe will be the most transformative application of object detection in the next five years, and why?