The world of machine learning is dynamic and fast-moving. As you start out or advance in this field, you’ll quickly recognize Python’s dominant role as the leading programming language for AI development. Within Python’s extensive ecosystem, two frameworks dominate the deep learning landscape: PyTorch and TensorFlow. These open-source tools, originally developed at Meta AI and Google respectively, have transformed how we build, train, and deploy models. Understanding how PyTorch and TensorFlow differ is valuable for anyone engaged in modern AI development.

Choosing the right framework can feel daunting, and it matters: it affects your workflow, the scalability of your projects, and even your career trajectory. Both PyTorch and TensorFlow offer powerful features for machine learning tasks, particularly deep learning, but they approach model development with distinct philosophies. Recognizing these differences is useful for novice and seasoned practitioners alike. This article walks through their main differences, strengths, uses, and performance, to help you identify the right tool for your next project.

The Dawn of Deep Learning: Why Python Reigns Supreme

Python’s simplicity, extensive libraries, and vibrant community have made it the leading choice for data science and machine learning. Its readable syntax lets developers concentrate on algorithms rather than on language ceremony. From preparing data with libraries like Pandas and NumPy to constructing advanced models, Python offers a consistent and efficient experience. The rise of deep learning, a subset of machine learning loosely inspired by the human brain, has further cemented Python’s lead.

Deep learning models frequently feature multiple layers of artificial neural networks. Consequently, they necessitate substantial computing power and sophisticated tools to manage their intricate designs.

Frameworks: The Engines of Deep Learning

This is where frameworks like PyTorch and TensorFlow become indispensable. They function as engines beneath Python’s surface, abstracting away the details of the underlying computations, memory management, and GPU acceleration. As a result, building a neural network becomes far more approachable than writing everything from scratch.

These frameworks provide ready-to-use components for common neural network layers, optimization algorithms, and utility functions, which significantly accelerates development. They also make efficient use of specialized hardware such as Graphics Processing Units (GPUs), which are critical for training large deep learning models. Without these frameworks, the rapid progress in computer vision, natural language processing, and generative AI would have been far harder to achieve. Understanding what PyTorch and TensorFlow each offer is therefore about more than selecting a tool. It shapes how you work with modern AI.

Unpacking the Core Differences: PyTorch vs TensorFlow Approaches

At the core of any deep learning framework lies its approach to handling computations, usually described as a “computational graph.” Picture your model’s operations (mathematical steps, activations, loss calculations) as nodes in a graph, connected by edges that dictate the flow of data. How this graph is constructed and executed is a primary distinction between PyTorch and TensorFlow.

Understanding Computational Graphs

Historically, TensorFlow (in its 1.x releases) employed a “static” computational graph. You first defined the entire structure of the network as a graph, then ran it inside a session, feeding data in only at that point. This “define-and-run” paradigm offered benefits for optimization and deployment, but it often made debugging difficult. PyTorch, by contrast, championed a “dynamic” or “define-by-run” graph, which is constructed as your code executes. This gives greater flexibility and immediate feedback, and many developers find it much closer to conventional Python programming.

The landscape has since evolved. TensorFlow 2.x made “eager execution” the default, which largely bridged the conceptual gap between the two frameworks: operations execute immediately rather than being added to a pre-defined graph beforehand. While this convergence brings the frameworks closer in how they operate, their foundational philosophies still influence their tooling and their best-fit use cases.
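To make the define-and-run idea concrete, here is a minimal pure-Python sketch. It is not TensorFlow code: the `graph` dictionary, the `OPS` table, and the `evaluate` function are hypothetical names invented for this illustration. The point is only that the whole structure exists as data before any input is fed in.

```python
# A toy "define-and-run" graph: the structure is declared first, as data.
# Each node maps to (operation name, list of input node names).
graph = {
    "x": ("input", []),
    "w": ("input", []),
    "mul": ("multiply", ["x", "w"]),
    "act": ("relu", ["mul"]),
    "loss": ("square", ["act"]),
}

# Hypothetical operation table for this sketch.
OPS = {
    "multiply": lambda a, b: a * b,
    "relu": lambda a: max(a, 0.0),
    "square": lambda a: a * a,
}


def evaluate(graph, feeds, target):
    """Run the pre-defined graph by feeding input values and resolving nodes."""
    cache = dict(feeds)  # input nodes are supplied by the caller

    def visit(name):
        if name not in cache:
            op, inputs = graph[name]
            cache[name] = OPS[op](*(visit(i) for i in inputs))
        return cache[name]

    return visit(target)


# Only now is data fed into the already-defined graph.
result = evaluate(graph, {"x": 3.0, "w": 0.5}, "loss")
print(result)  # 3.0 * 0.5 = 1.5, relu keeps 1.5, squared gives 2.25
```

Because the graph is plain data, a framework can inspect or rewrite it before execution, which is where the optimization benefits come from. The cost is that an error surfaces inside `evaluate` rather than on the line where the operation was declared.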

A split image illustrating PyTorch vs TensorFlow by showing PyTorch’s dynamic graph (on-the-fly construction) contrasted with TensorFlow’s static graph (pre-defined structure).

PyTorch: The Researcher’s Best Friend

PyTorch has garnered immense popularity among researchers and within academic institutions, and for good reason. Primarily, its design prioritizes flexibility and ease of use. Indeed, this makes it an excellent choice for experimentation and rapid iteration. Consequently, when considering deep learning frameworks for research, PyTorch frequently emerges as a preferred choice over alternatives like TensorFlow.

Ease of Use and Pythonic Nature

Many developers commend PyTorch for its intuitive, Pythonic design. If you are proficient in Python, learning PyTorch feels natural, largely because it works with Python’s existing debugging tools and conventional coding style. Its tensor operations are straightforward and often mirror NumPy array manipulations, which shortens the learning curve. In practice this means less time grappling with framework intricacies and more time improving the model, an advantage for new users.

Rapid Prototyping and Research

The dynamic computational graph is often cited as PyTorch’s most valuable feature for rapid development. Researchers frequently need to experiment with novel model architectures, modify models at runtime, or handle inputs of varying dimensions, and PyTorch’s define-by-run approach accommodates such changes easily. Debugging is also simpler: you can use standard Python debugging tools to step through your code and inspect values at each stage, as with any other Python script. This flexibility accelerates ideation, experimentation, and refinement, which is why PyTorch so often prevails in academic research.
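As a small illustration of define-by-run, the sketch below uses an ordinary Python `if` to decide which operations are recorded on each call. The `forward` function and the tensor values are made up for this example; only `torch.tensor`, `requires_grad`, and `backward()` are PyTorch’s own.

```python
import torch


def forward(x, w):
    # Plain Python control flow decides which operations run on this call,
    # so the recorded graph can differ from one input to the next.
    h = x * w
    if h.sum() > 0:
        h = h * 2
    else:
        h = h - 1
    return h.sum()


w = torch.tensor([1.0, -2.0, 3.0], requires_grad=True)
x = torch.tensor([1.0, 1.0, 1.0])

loss = forward(x, w)  # h = [1, -2, 3], sum is 2 > 0, so the "* 2" branch runs
loss.backward()       # gradients flow through the branch that actually executed

print(loss.item())  # 4.0
print(w.grad)       # tensor([2., 2., 2.])
```

Since every line runs immediately, you can place a breakpoint or a `print` inside `forward` and inspect `h` like any other Python value.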

Why PyTorch Excels in Research and Development

Efficient GPU utilization is paramount for deep learning, and PyTorch handles it well. It integrates with NVIDIA’s CUDA platform, so computations can be offloaded to the GPU with minimal code changes. This GPU acceleration is essential for training complex deep learning models in reasonable time. The framework manages the low-level details, letting you focus on scaling your models rather than on hardware programming. TensorFlow also supports CUDA GPUs, so the difference here is one of ergonomics rather than a capability unique to PyTorch.
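The “minimal code modifications” mentioned above usually amount to choosing a device and moving the model and data onto it. A minimal sketch, assuming a toy linear layer and random inputs chosen purely for illustration:

```python
import torch
from torch import nn

# Use the GPU when CUDA is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model = nn.Linear(4, 2).to(device)        # move the parameters to the device
batch = torch.randn(8, 4, device=device)  # create the inputs on the same device

output = model(batch)  # runs on whichever device was selected
print(output.shape, output.device)
```

The rest of the training code stays the same on either device, as long as the model and its inputs live on the same one.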

PyTorch’s Thriving Ecosystem

Though PyTorch is newer than TensorFlow, it has cultivated a remarkably active and fast-growing community, and it is especially popular among academic faculty and researchers. This community contributes to a rich ecosystem of specialized libraries and extensions. For instance, `TorchVision` provides common datasets, model architectures, and image transformations for computer vision tasks. Similarly, `TorchText` has offered tools for natural language processing, although its active development has since wound down. The collaborative spirit of the community ensures continuous innovation and ample support for new users, and active discussion forums and shared code repositories mean you are rarely on your own when you hit a problem.

TensorFlow: The Production Powerhouse

TensorFlow, backed by Google, has long been a predominant framework for large-scale industrial applications. Its comprehensive suite of tools and strong focus on production readiness make it an excellent choice for enterprise-level machine learning projects. For extensive deployments, TensorFlow frequently holds a distinct advantage.

Scalability and Robustness for Enterprise

TensorFlow is renowned for its scalability and robust architecture. It is engineered to handle massive datasets and to deploy models across diverse real-world environments. Its design supports distributed training across multiple CPUs, GPUs, and Google’s custom Tensor Processing Units (TPUs), which makes it effective for training exceptionally large models. For companies integrating machine learning into their core products, TensorFlow provides the stability and tooling needed for reliable, large-scale operations.

TensorFlow’s Enterprise Suitability

Comprehensive Ecosystem and Tools

Perhaps TensorFlow’s most compelling feature is its mature and expansive ecosystem of tools, which extends far beyond model building to support the entire machine learning lifecycle, a practice often referred to as MLOps. `TensorFlow Serving` enables flexible deployment of machine learning models in production environments. `TensorFlow Lite` optimizes models for mobile and embedded devices, enabling on-device AI. `TensorFlow.js` brings machine learning directly into web browsers, creating new opportunities for interactive AI applications.

Finally, `TensorBoard` offers visualization capabilities for monitoring model training, debugging issues, and evaluating performance. This end-to-end coverage is why TensorFlow is often preferred by large enterprises for widespread deployment.

Key Advantages of TensorFlow

Performance Optimization

While eager execution is intuitive, TensorFlow can also exploit static graphs. In TF 2.x, `tf.function` traces Python code into a graph that can be optimized before it runs, which can yield significant speedups, especially for large, repetitive computations. The framework also incorporates `XLA` (Accelerated Linear Algebra), a compiler for linear algebra operations, and provides built-in support for Google’s Tensor Processing Units (TPUs), specialized chips designed to accelerate AI workloads. Together, these optimization strategies contribute to TensorFlow’s reputation for high performance in large-scale applications.
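A minimal sketch of how `tf.function` behaves, assuming TensorFlow 2.x. The function `scaled_sum` and the `trace_count` counter are hypothetical names for this example. The counter is a plain Python side effect, so it only advances while TensorFlow is tracing the function into a graph, not on every call.

```python
import tensorflow as tf

trace_count = 0  # incremented by a Python side effect, so it counts traces


@tf.function
def scaled_sum(x, y):
    global trace_count
    trace_count += 1  # executes only while the function is being traced
    return tf.reduce_sum(x * 2.0 + y)


# Both calls use float32 tensors of shape (3,), so the traced graph is reused.
first = scaled_sum(tf.constant([1.0, 2.0, 3.0]), tf.constant([0.5, 0.5, 0.5]))
second = scaled_sum(tf.constant([4.0, 5.0, 6.0]), tf.constant([1.0, 1.0, 1.0]))

print(float(first), float(second))  # 13.5 33.0
print(trace_count)                  # 1: the second call reused the graph
```

Calling the function with a new shape or dtype triggers a fresh trace, so graph mode tends to pay off most when the same computation runs many times on inputs of a consistent shape.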

Large and Established Community

TensorFlow boasts a vast and well-established community. It has been around longer than PyTorch, which translates to abundant support, tutorials, documentation, and existing code projects. Its prevalent use in enterprise products also means a large pool of professionals with production experience and a robust support ecosystem. When you opt for TensorFlow, you draw on years of accumulated knowledge and on solutions that have been tested in many real-world scenarios.

Who Uses What? Adoption Trends in Deep Learning Frameworks

Understanding the technical distinctions is one aspect. However, observing the real-world adoption of these frameworks provides invaluable context for the PyTorch vs TensorFlow debate. Indeed, these trends reveal distinct preferences across various domains. This helps elucidate each framework’s unique strengths and perceived value.

Adoption Trends in Research and Academia

When it comes to cutting-edge research and advancing AI, PyTorch has emerged as the clear leader. Data from recent years offers compelling evidence:

- For example, in 2023, approximately 80% of papers presented at NeurIPS (Conference on Neural Information Processing Systems), a premier AI research conference, declared their framework choice. The vast majority utilized PyTorch.

- Furthermore, other sources indicate that over 75% of newly published deep learning research papers now favor PyTorch.

This research dominance stems directly from PyTorch’s flexibility, ease of iteration, and Pythonic feel. Researchers frequently experiment with novel model architectures and need rapid debugging, which makes PyTorch’s dynamic graph an ideal fit. The ability to refine a model quickly without extensive re-coding matters most in an environment driven by innovation. For students and academic faculty, learning PyTorch often means engaging directly with the latest advancements in the field.

Industry Adoption by Enterprises and Startups

While PyTorch clearly leads in research, the industrial landscape is more closely contested. TensorFlow, with its robust production-focused ecosystem, maintains a formidable presence. However, PyTorch is rapidly gaining traction.

According to the 2023 Stack Overflow Developer Survey:

- 8.41% of professional developers reported using TensorFlow.

- 7.89% reported using PyTorch.

PyTorch’s Accelerating Industry Adoption

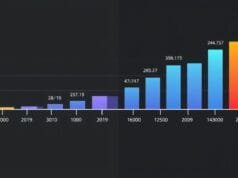

This data indicates that TensorFlow still holds a slight lead in overall developer usage. However, PyTorch’s growth trajectory is remarkable: its adoption among professional developers rose from 4.1% in 2020 to 7.89% in 2023. This increase suggests that PyTorch is no longer solely a research tool and is increasingly being employed for real-world applications.

Some predictions for Q3 2025 also highlight PyTorch’s growing influence, suggesting it will lead in research community adoption and capture approximately 55% of the production market, particularly in regions like North America and Europe. TensorFlow, meanwhile, continues to maintain a strong presence in enterprise environments, particularly where existing systems are built around its ecosystem. The competition between the two frameworks therefore remains dynamic within industry, which makes the choice a strategic one.
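A quick calculation puts the quoted survey figures in perspective. The variable names are arbitrary, and the numbers are the ones cited above.

```python
# Survey shares quoted in the text, in percent of professional developers.
pytorch_2020 = 4.1
pytorch_2023 = 7.89
tensorflow_2023 = 8.41

absolute_change = pytorch_2023 - pytorch_2020             # percentage points
relative_growth = (pytorch_2023 / pytorch_2020 - 1) * 100  # percent
gap_2023 = tensorflow_2023 - pytorch_2023                  # percentage points

print(f"PyTorch gained {absolute_change:.2f} points ({relative_growth:.1f}% relative growth)")
print(f"TensorFlow's 2023 lead: {gap_2023:.2f} points")
```

On these figures PyTorch’s share nearly doubled in three years (about 92% relative growth), while TensorFlow’s remaining lead in 2023 was roughly half a percentage point.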

Real-World Applications in Practice

To grasp the impact of these frameworks, it helps to examine the companies that use them. Their choices often underscore each framework’s core strengths and show how well it aligns with specific business requirements.

Here’s a quick look at well-known companies and their preferred frameworks:

| Framework | Companies | Typical Applications |
|---|---|---|
| TensorFlow | Google, Airbnb, Airbus, Coca-Cola, GE Healthcare, Intel, JPMorgan Chase & Co., Capital One, Apple, Walmart, Meta Platforms | Image Classification, Object Detection, Anomaly Detection, Recommendation Systems, Predictive Maintenance, Fraud Detection, Autonomous Vehicles |
| PyTorch | Meta, Amazon Advertising, Salesforce, Stanford University, various IT/Services SMEs (10-50 employees, $1M-$10M revenue) | Research & Development, Advanced NLP, Computer Vision Research, Generative AI, Personalized Advertising, Drug Discovery |

The table above illustrates a trend in framework adoption. Large enterprises often have extensive, complex, global operational requirements, and they demand robust deployment capabilities and comprehensive MLOps ecosystems. Such companies frequently gravitate towards TensorFlow, primarily because its ecosystem for model serving, hardware optimization, and end-to-end process management suits organizations of that size.

Strategic Framework Choices in Industry

Conversely, PyTorch sees extensive use in companies that prioritize rapid innovation, cutting-edge research, and agile development. Meta (formerly Facebook), where PyTorch originated, naturally employs it extensively across its products. Small to mid-size enterprises (SMEs) in IT and Services find PyTorch’s ease of use and flexibility well suited to developing custom AI solutions while staying agile. Stanford University’s usage further underscores its strong ties to academia.

Logos of major companies like Google, Meta, Amazon, Salesforce, Capital One, and Airbnb, visually grouped by their primary framework preference.

Ultimately, the framework choice often reflects a company’s primary objectives. Is the focus on novel research and rapid development, or on deploying highly robust, scalable solutions for millions of users? Both frameworks excel in their respective domains, so there isn’t a single “best” option. It’s more about identifying the “best fit” for a specific project.

Under the Hood: Performance in the PyTorch vs TensorFlow Comparison

Beyond developer adoption and tooling, a framework’s performance and resource utilization are critically important, especially for training large deep learning models. These factors directly affect training time, computing costs, and project feasibility. Both PyTorch and TensorFlow are engineered for high performance, but differences can emerge depending on the specific task and hardware configuration, and these can influence which framework you choose.

PyTorch vs TensorFlow: Training Time and Model Speed

When it comes to model training speed, benchmark tests often yield varying results. The differences depend primarily on model complexity, data size, and the hardware used.

- Smaller Models: For smaller models or initial experiments, PyTorch can occasionally train faster. This is often attributed to the low overhead of its immediate, define-by-run execution, which avoids the graph-building and compilation steps TensorFlow traditionally required. With eager execution in TensorFlow 2.x, this gap has largely narrowed.

- Larger Models and Longer Runtimes: For exceptionally large and complex models trained over extended periods, TensorFlow can exhibit better GPU utilization and memory efficiency, particularly when using its static graph features (via `tf.function`). Optimizing the entire graph before execution allows whole-program speed improvements that may not be attainable with a purely dynamic approach.

- Hardware Specifics: Some studies suggest that PyTorch might train faster on GPUs for certain tasks due to its optimized CUDA integration. Conversely, TensorFlow can sometimes be quicker on CPUs for specific mathematical operations, a difference sometimes attributed to its highly optimized C++ codebase.

Performance Factors in Model Training

It’s important to remember that these are general observations. Real-world performance is influenced by numerous factors, including:

- Specific Model Architecture: Certain architectures might naturally align better with one framework’s optimization strategies.

- Data Pipeline Efficiency: The efficiency of data loading and preparation can significantly affect total training time, often more than any inherent difference between the frameworks.

- Hardware Configuration: The type of GPU, CPU, and available memory all play a pivotal role.
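Because results depend so heavily on these factors, it is usually worth measuring your own workload rather than relying on published numbers. The harness below is a framework-agnostic sketch: `benchmark` and `dummy_training_step` are hypothetical names, and the dummy workload is a stand-in for a real training step in either framework.

```python
import statistics
import time


def benchmark(step_fn, warmup=3, repeats=10):
    """Time step_fn after a few warm-up calls; return (median, best) in seconds."""
    for _ in range(warmup):
        step_fn()  # warm-up absorbs one-time costs such as tracing or caching
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        step_fn()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings), min(timings)


def dummy_training_step():
    # Placeholder workload; replace with one real training step.
    return sum(i * i for i in range(10_000))


median_s, best_s = benchmark(dummy_training_step)
print(f"median {median_s * 1e3:.3f} ms, best {best_s * 1e3:.3f} ms")
```

When timing GPU work, remember that kernels are launched asynchronously. The step function should wait for the device to finish (in PyTorch, by calling `torch.cuda.synchronize()`) before the timer stops, or the measurement will only capture launch overhead.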

PyTorch vs TensorFlow: Memory Footprint and Efficiency

Memory consumption during training is another critical metric, especially when working with extremely large models or constrained GPU memory. Excessive memory usage can lead to out-of-memory errors, which force developers to use smaller batch sizes or less efficient training methods.

TensorFlow often exhibits lower memory consumption during training than PyTorch, particularly for larger and more complex models. This efficiency stems primarily from its graph optimization and memory management techniques. For example, a frequently cited benchmark showed TensorFlow using approximately 1.7 GB of RAM during training for a specific model, while PyTorch consumed around 3.5 GB for the identical task. A gap of that size matters on resource-constrained systems, although results will vary with the model and framework version.

Factors Behind Memory Efficiency

This disparity can be attributed to:

- Static Graph Optimizations: When TensorFlow compiles a static graph, it can analyze the entire computational process. Thus, it can make global decisions regarding memory allocation and deallocation. This minimizes peak memory usage.

- Memory Fragmentation: Dynamic graphs, by their very nature, can occasionally lead to increased memory fragmentation. This occurs as operations are dynamically added and removed. While PyTorch has made commendable progress in this area, the fundamental difference in graph construction can still manifest as distinct memory patterns in a PyTorch vs TensorFlow comparison.
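The intuition behind the static-graph point can be shown with a toy liveness calculation. This is an illustration only, not how either framework actually allocates memory: `peak_memory`, the `ops` list, and the buffer sizes are all invented for the sketch. Knowing the full graph in advance lets a planner see when each buffer is last used and release it immediately afterwards.

```python
def peak_memory(ops, free_after_last_use):
    """ops: list of (output_name, input_names, size_mb) in execution order."""
    # Pass 1: with the whole graph known, record the last step using each buffer.
    last_use = {}
    for step, (out, inputs, _) in enumerate(ops):
        last_use[out] = step
        for name in inputs:
            last_use[name] = step

    # Pass 2: simulate execution and track the total size of live buffers.
    live = {}
    peak = 0
    for step, (out, inputs, size) in enumerate(ops):
        live[out] = size
        peak = max(peak, sum(live.values()))
        if free_after_last_use:
            for name in [n for n in live if last_use[n] == step]:
                del live[name]  # this buffer is never read again
    return peak


# A simple chain: each op reads the previous output.
ops = [
    ("a", [], 100),
    ("b", ["a"], 100),
    ("c", ["b"], 100),
    ("d", ["c"], 50),
]

print(peak_memory(ops, free_after_last_use=False))  # 350: every buffer is kept
print(peak_memory(ops, free_after_last_use=True))   # 200: only two are live at once
```

Training complicates this picture, because activations must be kept for the backward pass, but the same principle of planning allocations from a known graph applies.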

For projects where memory is a critical constraint, such as training extremely deep networks or utilizing GPUs with limited VRAM, TensorFlow’s efficiency can provide a distinct edge when choosing between PyTorch vs TensorFlow.

Model Accuracy: A Level Playing Field

One of the most reassuring findings for users is that, generally speaking, both frameworks yield very similar accuracy for identical models. In particular, when employing the same neural network architecture and training it with identical data and hyperparameters, you can expect the final model accuracy to be virtually indistinguishable. This holds true irrespective of whether you used PyTorch or TensorFlow.

This consistency arises because the underlying mathematical operations and optimization algorithms are standardized across deep learning. Consequently, both frameworks correctly implement these fundamental components. Therefore, the choice between PyTorch vs TensorFlow does not typically necessitate a trade-off in your model’s predictive performance. Instead, it revolves around the development experience, community support, suitability for specific tasks, and deployment capabilities. Ultimately, this allows developers to concentrate on model design and data quality. They do not have to concern themselves with accuracy discrepancies attributable to the framework itself.

A bar chart visually comparing memory usage (e.g., in GB) of PyTorch and TensorFlow for a typical deep learning model during training, showing TensorFlow with a lower bar.

Making the Right Choice: PyTorch vs TensorFlow for Your Project

Deciding between PyTorch and TensorFlow isn’t about declaring one universally superior. Instead, it’s about aligning the framework’s strengths with your project’s precise requirements and your team’s operational paradigm. Indeed, both are incredibly powerful. Therefore, a clear understanding of your specific needs will guide you toward the optimal choice in the PyTorch vs TensorFlow debate.

PyTorch vs TensorFlow: When to Lean Towards PyTorch

You might find PyTorch to be your ideal partner if your project or working style aligns with these characteristics:

PyTorch for Research and Development

- Research and Rapid Prototyping: If your work involves extensive experimentation, novel model architectures, or swift validation of new concepts, PyTorch’s flexibility and dynamic graphs will significantly accelerate your iteration cycles. Its ease of debugging is a major benefit here, which makes PyTorch a compelling choice for researchers.

Ease of Adoption for New Projects

- New or Academic Projects: For students, academic faculty, or anyone embarking on a new project with a focus on discovery, PyTorch’s intuitive and Pythonic design can make the learning curve smoother and development more enjoyable. This lowers the initial barrier to entry, a key factor for beginners.

Agility and Pythonic Integration

- Smaller Teams and Agility: Teams that prioritize speed, agile development, and the capacity for rapid model adaptation will appreciate PyTorch’s flexibility.

- Strong Python Familiarity: If you are highly proficient with Python and its debugging tools, PyTorch’s integration with them will feel natural and productive.

Leading in Emerging AI Domains

- Cutting-Edge Fields: PyTorch is a leading platform for research, especially in domains like natural language processing and generative AI. Many of the latest models and tools in these fields are first released and best supported in PyTorch.

Consider PyTorch if your focus is on discovery, novel concepts, and an iterative development process. It lets you build and modify models with less boilerplate code and greater creative freedom.

PyTorch vs TensorFlow: When TensorFlow Shines Brightest

TensorFlow, with its robust suite of tools and enterprise-grade features, stands out as the stronger choice for projects with a different set of priorities, most of them tied to production environments.

TensorFlow for Large-Scale Production

- Large-Scale Production Deployments: If your primary objective is to deploy machine learning models for millions of users in a stable, scalable, and easily manageable manner, TensorFlow’s comprehensive MLOps ecosystem (TensorFlow Serving, TensorFlow Lite, TFX) is a strong fit. It is engineered specifically for reliable, large-scale operations.

Versatility Across Platforms and Devices

- Cross-Platform and Edge Device Deployment: For deploying models on mobile devices (iOS, Android), embedded systems, web browsers, or specialized hardware like TPUs, TensorFlow offers tailored tools (TensorFlow Lite, TensorFlow.js) that simplify these complex tasks. This cross-platform reach is a significant factor when you have diverse deployment targets.

TensorFlow’s Key Strengths

Suitability for Enterprise Environments

- Enterprise Environments: Large organizations typically have established systems, stringent performance requirements, and a need for extensive monitoring and version control. TensorFlow’s mature tooling and standardized workflows are well suited to these demands, which often makes it the default choice for corporate adoption.

High Performance for Critical Workloads

- Performance-Critical Applications: TensorFlow’s optimization capabilities provide a significant advantage when peak performance and efficient resource utilization are paramount, especially for exceptionally large models or when using TPUs.

Broad Skillset and Team Integration

- Diverse Team Skillsets: TensorFlow’s broader adoption in commercial settings translates to a larger pool of professionals with production experience. Its ecosystem also supports collaborative workflows across multiple teams (e.g., ML engineers, DevOps, mobile developers).

TensorFlow’s Production-Ready Advantages

Choose TensorFlow when your project demands industrial robustness, comprehensive deployment processes, and strong performance across numerous target systems. It provides an end-to-end solution from experimentation to full deployment.
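The two checklists above can be condensed into a rough rule of thumb. The helper below is purely illustrative: the trait labels and the `suggest_framework` function are hypothetical, and simply counting matching traits is an arbitrary simplification, not a validated decision procedure.

```python
# Hypothetical trait labels summarizing the two checklists above.
PYTORCH_SIGNALS = {
    "research_prototyping",
    "new_or_academic_project",
    "small_agile_team",
    "strong_python_familiarity",
    "nlp_or_generative_ai",
}
TENSORFLOW_SIGNALS = {
    "large_scale_production",
    "mobile_or_browser_deployment",
    "enterprise_environment",
    "performance_critical_or_tpu",
    "diverse_team_skillsets",
}


def suggest_framework(project_traits):
    """Return the framework whose checklist matches more of the given traits."""
    traits = set(project_traits)
    pytorch_score = len(traits & PYTORCH_SIGNALS)
    tensorflow_score = len(traits & TENSORFLOW_SIGNALS)
    if pytorch_score > tensorflow_score:
        return "PyTorch"
    if tensorflow_score > pytorch_score:
        return "TensorFlow"
    return "either"


print(suggest_framework(["research_prototyping", "nlp_or_generative_ai"]))
print(suggest_framework(["large_scale_production", "mobile_or_browser_deployment"]))
```

In practice some traits carry far more weight than others. A hard requirement such as on-device deployment can outweigh several softer preferences.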

A clear, simple flowchart or decision tree helping users choose between PyTorch and TensorFlow based on project needs like “Is it for research?” or “Is it for production deployment?”

The Evolving Landscape: Framework Convergence

The intense competition between PyTorch and TensorFlow has been a powerful catalyst for innovation. The rivalry continually pushes both frameworks to evolve and adopt each other’s strengths, and as a result many of the traditional distinctions between them are blurring.

TensorFlow, with its eager execution, has embraced the flexible, imperative coding style popularized by PyTorch, which makes it far more user-friendly for rapid prototyping and debugging than its older iterations. PyTorch, in turn, has been steadily enhancing its production readiness. For example, it introduced TorchScript for model serialization and optimization, making it more viable for large-scale deployments.

Shared Development Focuses

Both frameworks are actively investing effort into:

- Enhanced Ease of Use: Simplifying APIs and documentation to lower the barrier to entry for machine learning.

- Optimizing Hardware Utilization: Improving performance and efficiency across a wider range of hardware, including GPUs, TPUs, and specialized AI accelerators.

- On-Device AI: Developing tools and methodologies for running machine learning models efficiently on resource-constrained devices. This enables novel applications in IoT, robotics, and mobile technology.

- Automated Machine Learning (AutoML): Incorporating automated processes for model selection, hyperparameter tuning, and neural architecture search, which streamlines development even further.

The PyTorch vs TensorFlow Convergence Trend

This ongoing evolution suggests that the choice between PyTorch and TensorFlow will depend less on fundamental feature sets. Instead, it will hinge on individual developer preference, on the maturity of each framework’s tooling for specific use cases, and on existing team proficiencies. The trend points towards a future where both frameworks coexist, each excelling in its niche while offering a robust set of shared capabilities.

For any aspiring or seasoned AI practitioner, this convergence leads to a simple piece of advice: it is highly beneficial to learn both frameworks. Understanding both PyTorch and TensorFlow not only makes you a more versatile developer but also gives you a deeper grasp of the foundational principles of deep learning. Proficiency in both lets you select the right tool for each task, adapt to different team setups, and keep up with a rapidly evolving field. In conclusion, the PyTorch vs TensorFlow discussion is less about identifying a clear winner and more about making informed, strategic choices.

An abstract representation of two initially separate paths, labeled “PyTorch” and “TensorFlow,” gradually converging and merging into a single, wider future path representing advanced AI development.

Beyond the Code: Cultivating Your Machine Learning Journey

While selecting the right framework is undoubtedly a crucial decision, it’s important to remember that frameworks are merely tools. Ultimately, the true mastery in machine learning lies in understanding the core concepts, the underlying mathematics, the data itself, and the specific problem you’re aiming to solve. Moreover, an excessive focus on framework-specific code can sometimes obscure the fundamental principles that underpin AI’s power. Indeed, the PyTorch vs TensorFlow debate, while significant, should not eclipse this overarching truth.

Instead, cultivate a questioning mindset, embrace continuous experimentation, and actively participate in the vibrant machine learning community. For example, share your insights, learn from others’ experiences, and contribute to the collective knowledge. Ultimately, whether you’re building a novel research model with PyTorch or deploying a mission-critical AI system with TensorFlow, your machine learning journey is fundamentally about continuous learning and innovation. Therefore, embrace the challenge, savor the process, and let these powerful frameworks augment your creativity.

As you embark on your machine learning projects, which framework’s philosophy best aligns with your approach, and why?